Damn, the things used to be these thin little, well, cards. Nowadays they are reaching the size of entire consoles and can more accurately be called graphics bricks. Is the tech so stagnant that they won’t be getting smaller again in the future?

The high-end ones are so huge, power-hungry, and fucking expensive that I’m starting to think they might as well just come with an integrated CPU and system RAM (in addition to the VRAM) on the same board.

What is the general industry expectation of what GPUs are going to be like in the mid-term future, maybe 20 to 30 years from now? I expect that if AI continues to grow in scope and ubiquity, an unprecedented amount of effort and funding is going to be thrown at R&D for these PC components that were once primarily relegated to being toys for gamers.

The card in the picture is of a kind that no longer exists: the basic, office computer GPU.

It got entirely displaced by integrated graphics.

So in a way they did get smaller, so small that they share a piece of silicon with the CPU. The only cards that remain are those that are so power-hungry they can’t share power and cooling with the CPU.

GeForce 9500 GT was for basic, office computers in 2008?

To play Halo Online in between office meetings, and competitive bombsweeper

I found a dusty old office desktop from circa 2008 in my parents’ garage a few months ago, and I noticed it had both an AMD and an Nvidia sticker on it, so I decided to open it up to see what card it had. When I got the thing open I was perplexed to find there was no card in it at all, and the single-core Sempron wasn’t an APU, so what gives?

Found out that not only did integrated graphics exist back then on AMD platforms, but they were built right into the motherboard and Nvidia manufactured them. It had no fan at all or really any obvious indication of its existence lol. Just an innocuous little piece of aluminum for a heat sink, stuck firmly to the chip with some kind of strong adhesive. Took a while to figure out that’s what that was. Didn’t know this was a thing, but that must’ve been a lot lower-end than a 9500 GT.

Yes, it was the cheapest graphics card that could decode 1080p H.264 video in real time (and the acceleration worked in the Flash player). The 8500 GT could also do it, but it was never popular. It made a huge difference when YouTube became a thing.

According to cosecantphi below, who opened a cheap low-end office computer from 2008, it had integrated graphics, not a dedicated graphics card.

I find it extremely hard to believe that schools and libraries and whatnot were building PC towers with dedicated graphics cards like the 9500 GT; that was an exclusively gamer/performance nerd thing to do.

Of course you had to have something to drive the VGA outputs. Usually this meant a VIA, SiS, or Unichrome chip in the motherboard. Those chips often had no 3D acceleration at all, and a max resolution of 1280x1024. You were lucky to have shaders instead of fixed-function pipelines in 2008-era integrated graphics, and hardware accelerated video decoding was unheard of. The best integrated GPUs were collaborations with nVidia that basically bundled a GPU with the mainboard, but those mainboards were expensive.

Windows Vista did not run well at all on these integrated chips, but nobody liked Windows Vista so it didn’t matter. After Windows 7 was released, Intel started bundling their “HD Graphics” on CPUs and the on-die integrated GPU trend got started. The card in the picture belongs to the interim time where the software demanded pixel shaders and high-resolution video but hardware couldn’t deliver.

They left a lot of work for the CPU to do: if you try to browse hexbear on them you can see the repainting going from top to bottom as you scroll. You can’t play 720p video and do anything else with the computer at the same time, because the CPU is pegged. But if you put the 9500 GT in them, suddenly you can use the computer as an HTPC. It was not an expensive card, it was 60-80 USD, and it was a logical upgrade to a tower PC you already had to make it more responsive and enable it to play HD video.

this type of “expanding to fit the new constraints” is the central reason for human suffering, ecological damage, and everything else

The tech was mastered, so humans naturally started making bigger, beefier, resource-hungry tech that consumed 3000% more resources to make their games look 30% better.

If someone’s not okay with playing their games on 2007-era TF2 graphics I don’t consider them a real socialist. I’m not even saying such sacrifices would be necessary under socialism, but being unwilling to make that sacrifice is probably indicative that you won’t be compatible with it

If someone’s not okay with playing their games on 2007-era TF2 graphics I don’t consider them a real socialist.

I’m going to go a step further and say we need to ban personal electronics eventually: phones, personal computers, TVs, game consoles. This entertainment is contributing to the alienation of the working class. If we force people to go into more communal spaces to access this media, like arcades, movie theaters, and computer cafes, it would be more environmentally friendly, and as an added bonus we can censor all reactionary content.

Of course, party officials shouldn’t be forced to obey these fucking laws. Fuck the peasants. It’s going to take a long time for the transition to communism to happen, so it’s in the interests of the terminally online left such as ourselves to collaborate and share the spoils of corruption with each other. We gotta live by a “do as I say, not as I do” motto.

I’m going to go a step further and say we need to ban personal electronics eventually: phones, personal computers, TVs, game consoles. This entertainment is contributing to the alienation of the working class. If we force people to go into more communal spaces to access this media, like arcades, movie theaters, and computer cafes, it would be more environmentally friendly, and as an added bonus we can censor all reactionary content.

I’m going to go a step further and dictate you wear a dirty ushanka at all times, do not shave, and only take cold sponge baths because hot running water is bourgeoisie decadence.

I hope every graphics card explodes and we have to go back to Sega Mega Drive

Sega does what nintendon’t

Nintendoes what Segan’t

i don’t think it’s getting much bigger than the 4090. even the 4090 has sagging issues. sure, there are brackets and vertical mounting, but vertical mounts on most cases have three slots.

there are ways to get more gpu power: just have more GPUs. obviously not suitable for gaming (microstutters and all the issues SLI/CrossFire had), but for AI it’ll work just fine and is exactly what’s going on.

PC components that were once primarily relegated to being toys for gamers.

i have to disagree, GPUs always had uses other than gaming, like video rendering and shit. Gamers were just the most marketed to.

My guess is we’re going to start seeing case and motherboard designs that privilege GPUs and relegate regular CPU function to a small system on a chip.

GPUs can get bigger if you build the system around them instead of plugging them in as an accessory.

My RX 7900 XTX came with its own bracket to reduce sag. It’s honestly insane the heft some new cards have to them.

In 30 years they’ll find the remnants of computers and assume graphics cards were just funky portable heating units. Back then the planet still got cold after all, so people needed a way to stay warm.

Integrated cpu xD

I wouldn’t rule that out actually. NVIDIA did buy Mellanox so they could integrate Infiniband with NVIDIA GPUs more directly so you can have fancier GPU clusters. At that point, having a powerful CPU and associated infrastructure is kind of pointless. I guess they’ll end up looking like whatever SANs (storage area network, i.e. all the hard drives are separate from the compute servers) look like.

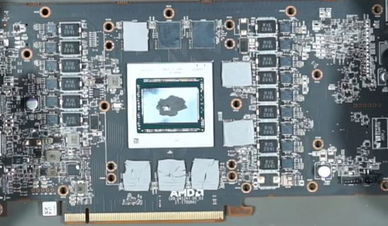

For consumer stuff, obviously idk. I think the giant GPUs are maybe because people are willing to buy them. The actual GPU itself provided by NVIDIA or AMD or Intel is actually a tiny little thing that looks like a CPU.

(GPU board without fans and plastic cover, you can see the GPU chip in the middle taking up a small part of the board)

And when they’re on a laptop, it’s just that actual GPU that’s soldered onto the motherboard.

So GPUs themselves aren’t actually getting that much bigger. I don’t know if it’s the memory, power, or the fans that are the cause of the massive GPU board sizes.


Higher power consumption requires more cooling, and the easiest way to do that is to add more surface area.
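A rough way to see why is Newton’s law of cooling, P = h·A·ΔT: for a fixed heat-transfer coefficient h and allowed temperature rise ΔT, the fin area a heatsink needs grows linearly with the power it has to shed. Here’s a minimal sketch assuming an idealized forced-air h of 50 W/(m²·K) and a 40 K rise over ambient; the 50 W and 450 W figures are round numbers picked for illustration, not measured specs of any particular card, and real heatsinks (fin efficiency, airflow, heat pipes) are messier than this.

```python
def required_area_m2(power_w: float, h_w_per_m2k: float, delta_t_k: float) -> float:
    """Surface area needed to shed power_w watts, from P = h * A * dT (idealized)."""
    if h_w_per_m2k <= 0 or delta_t_k <= 0:
        raise ValueError("h and delta_t must be positive")
    return power_w / (h_w_per_m2k * delta_t_k)


# Assumed values for illustration only, not measurements.
H_ASSUMED = 50.0   # forced-air heat-transfer coefficient, W/(m^2*K)
DT_ASSUMED = 40.0  # allowed rise above ambient, K

for label, watts in [("50 W card (assumed)", 50.0), ("450 W card (assumed)", 450.0)]:
    area = required_area_m2(watts, H_ASSUMED, DT_ASSUMED)
    print(f"{label}: {area:.4f} m^2 of fin area")
```

Under these assumptions, 9x the power means 9x the fin area, which is why the coolers balloon while the chip itself stays small.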

I almost wonder if custom-built desktop computers will eventually go away. I feel like it would be a lot more efficient to just have the CPU and GPU soldered onto the same motherboard the same way a laptop would. Then they could both share a common cooling and power infrastructure.

And maybe liquid cooling will become more popular.

Maybe some day there will be a massive breakthrough in computer part cooling. But coolant loops are already a thing I guess, and that’s what liquid cooling is.

Look at how small a 4090 is without the heatsink:

Integrated cpu xD

Only Intel and AMD could do it any time soon, since x86-64 licensing will never let Nvidia build those CPUs.

That’s only if it’s x86_64 and not ARM or RISC-V and only if NVIDIA is designing the CPU themselves which they wouldn’t do. Desktop/industrial motherboards just have a socket that fits a certain CPU pinout.

A lot of it is heat dissipation, which goes back to how much electricity these things are using.

But the components are bigger, the first PC I assembled as a kid had 128 stream processors but the 40 series GPUs go up to 16384 cores. CUDA cores are a lot bigger too, despite die shrinks. More memory and associated components.

Me in 2010: Wow the GTX 480 is pretty big

Me now:

You can absolutely get smaller cards, they’re just less powerful.

Yup. GT 1030 and RX 550 are well within that size and price range.

I hope so because it’s funny

comically large PC part

PC components that were once primarily relegated to being toys for gamers.

somewhere along the way, they started marketing the whole PC as a toy for gamers.

the graphics card will grow to become the size of a small capybara while the home computer will shrink down until it is no longer visible to the human eye. the size will pose a problem, as a capybara is bigger than a speck of dust. such is the contradiction of technological development under capitalism

All office computers need to have Wolfenstein 3D installed via Windows 3.1, so that modern dads can continue to engage with Take Your Child to Work Day, without having to do any actual parenting.

My understanding is that the primary reason for the size is heat limitations. The vast majority of the size of modern cards is heatsink radiator grills and fans.

I installed a 3080 in my friend’s PC ages ago and I was kinda like “damn, is the port gonna hold this thing up?”

Lol, not sure if you’re even aware, but vertical mounting has become a big trend among PC enthusiasts because of this. I have one of these on my 4090 rig:

I wasn’t. Haven’t really kept up too much with current trends bc tbh I can’t afford anything, so I’m just vibing with what I got. But I’m totally not surprised to see that; it felt pretty dicey at the time, and it’s my understanding that the 40 series are even larger lol.

Yeah the 3080 is a chonker, but the 4090 is just fucking huge, it’s like a mid sized console just sitting in your PC. It’s between a Wii and a PS2

I had to bend and break parts of my case to get my card to fit. I would need a bigger case if my next card were any bigger.