cross-posted from: https://programming.dev/post/177822

It’s coming along nicely, I hope I’ll be able to release it in the next few days.

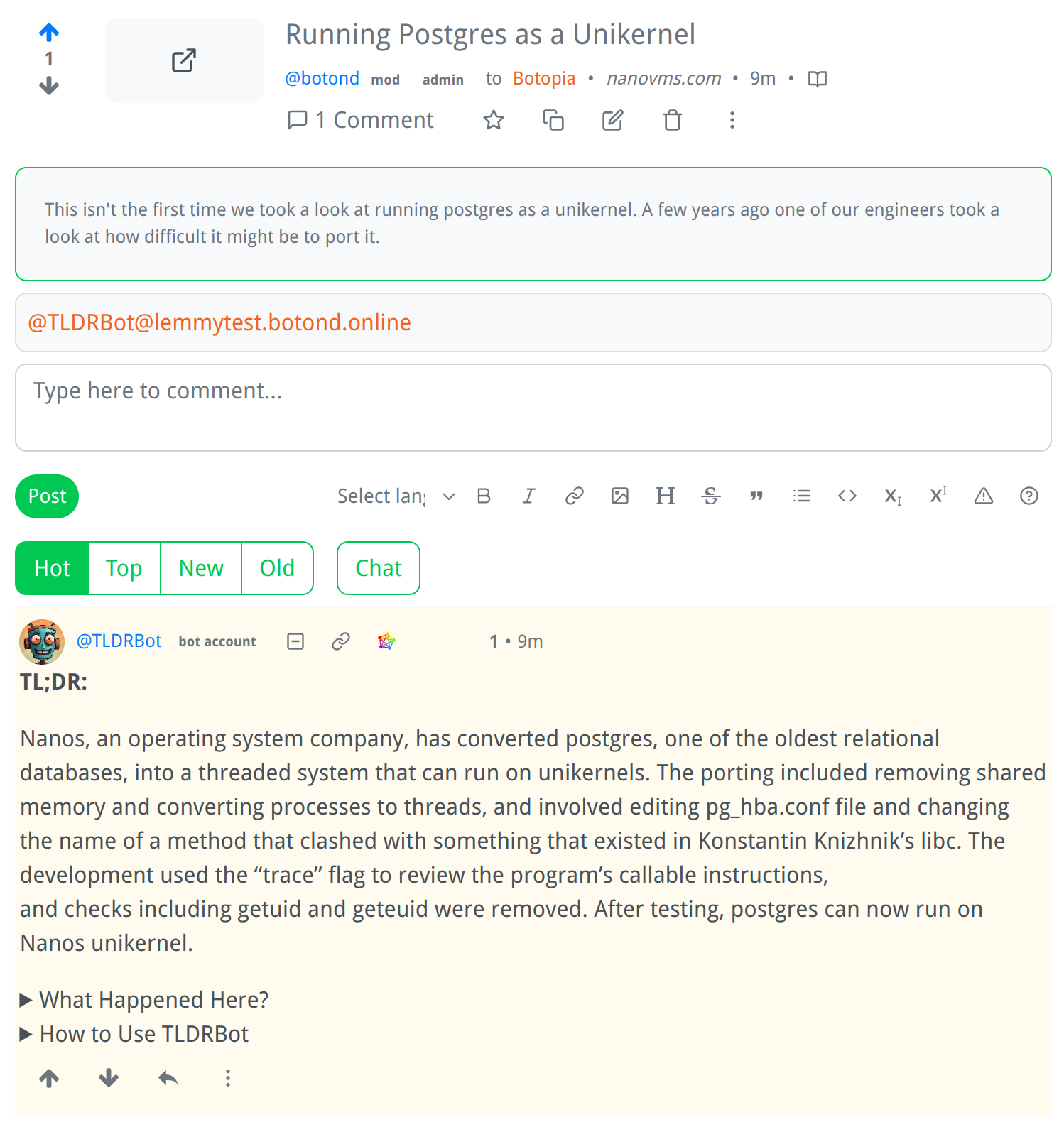

Screenshot:

How It Works:

I am a bot that generates summaries of Lemmy comments and posts.

- Just mention me in a comment or post, and I will generate a summary for you.

- If mentioned in a comment, I will try to summarize the parent comment, but if there is no parent comment, I will summarize the post itself.

- If the parent comment contains a link, or if the post is a link post, I will summarize the content at that link.

- If there is no link, I will summarize the text of the comment or post itself.

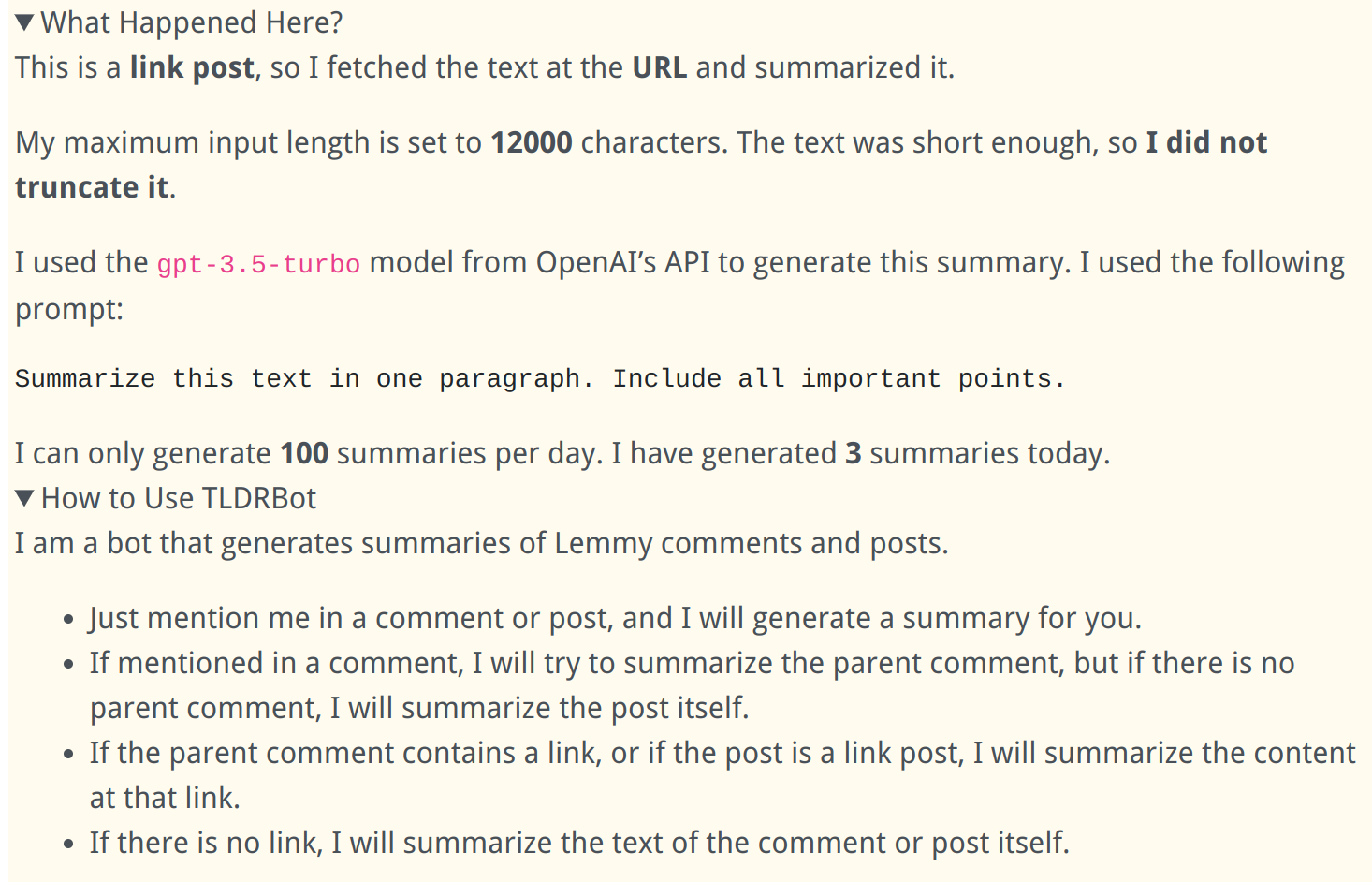

Extra Info in Comments:

Prompt Injection:

Of course it’s really easy (but mostly harmless) to break it using prompt injection:

It will only be available in communities that explicitly allow it. I hope it will be useful, I’m generally very satisfied with the quality of the summaries.

Will it work on all instances?

And how do you manage costs? Querying GPT isn’t that expensive but when many people use the bot costs might accumulate substantially

- Only on programming.dev, at least in the beginning, but it will be open source so anyone will be able to host it for themselves.

- I set up a hard limit of 100 summaries per day to limit costs. This way it won’t go over $20/month. I hope I will be able to increase it later.

I am very happy to hear that you will open source it!

I am curious - have you tested how well it handles a direct link to a scientific article in PDF format?

It only handles HTML currently, but I like your idea, thank you! I’ll look into implementing reading PDFs as well. One problem with scientific articles however is that they are often quite long, and they don’t fit into the model’s context. I would need to do recursive summarization, which would use much more tokens, and could become pretty expensive. (Of course, the same problem occurs if a web page is too long; I just truncate it currently which is a rather barbaric solution.)

Thanks for your response!

I imagined that this would be harder to pull off. There is also the added complexity that the layout contains figures and references… Sill, it’s pretty cool, I’ll keep an eye on this project, and might give self-hosting it a try once it’s ready!

LLMs can do a surprisingly good job even if the text extracted from the PDF isn’t in the right reading order.

Another thing I’ve noticed is that figures are explained thoroughly most of the time in the text so there is no need for the model to see them in order to generate a good summary. Human communication is very redundant and we don’t realize it.

Just curious because I was discussing this with someone else on here. Do you think it’s possible to create a tldw bot with chatgpt for YouTube videos as well?

It is definitely possible, at least for videos that have a transcript. There are tools to download the transcript which can be fed into an LLM to be summarized.

I tried it here with excellent results: https://programming.dev/post/158037 - see the post description!

See also the conversation: https://chat.openai.com/share/b7d6ac4f-0756-4944-802e-7c63fbd7493f

I used GPT-4 for this post, which is miles ahead of GPT-3.5, but it would be prohibitively expensive (for me) to use it for a publicly available bot. I also asked it to generate a longer summary with subheadings instead of a TLDR.

The real question is if it is legal to programmatically download video transcripts this way. But theoretically it is entire possible, even easy.

Oh, I’ve just realized that it’s also possible if the video doesn’t have a transcript. You can download the audio and feed it into OpenAI Whisper (which is currently the best available audio transcription model), and pass the transcript to the LLM. And Whisper isn’t even too expensive.

Not sure about the legality of it though.

Yes, you can use the transcription.

Will it work on all instances?

And how do you manage costs? Querying GPT isn’t that expensive but when many people use the bot costs might accumulate substantially

I hope it does not get too expensive. You might want to have a look at locally hosted models.

Can you do this with GPT? Is it free?

I looked into using locally hosted models for some personal projects, but they’re absolutely awful. ChatGPT 3.5, and especially 4 are miles above what local models can do at the moment. It’s not just a different game, the local model is in the corner not playing at all. Even the Falcon instruct, which is the current best rated model on HuggingFace.

Unfortunately the locally hosted models I’ve seen so far are way behind GPT-3.5. I would love to use one (though the compute costs might get pretty expensive), but the only realistic way to implement it currently is via the OpenAI API.

EDIT: there is also a 100 summaries / day limit I built into the bot to prevent becoming homeless because of a bot

By the way, in case it helps, I read that OpenAI does not use content submitted via API for training. Please look it up to verify, but maybe that can ease the concerns of some users.

Also, have a look at these hosted models, they should be way cheaper than OpenAI. I think that this company is related to StabilityAI and the guys from StableDiffusion and also openassistant.

There is also openassistant, but they don’t have an API yet. https://projects.laion.ai/Open-Assistant

Yes, they have promised explicitly not to use API data for training.

Thank you, I’ll take a look at these models, I hope I can find something a bit cheaper but still high-quality.

@TLDRBot

It doesn’t work yet, the screenshots are from a private test instance.

Yeah, but if the thing is that I don’t want my content to be sent to an AI? Your idea is very cool, but be sure to implement a way to detect if the user that posted the comment/post doesn’t have a #nobot tag in its profile description.

At this point you may as well not comment or post anything on the internet if you are worried about it, the horse has already left the gate…

Just because the theives have broken into your kitchen doesn’t mean you have to make them breakfast.

Just because some companies are abusive doesn’t mean you have to be too. Let’s try, at best, to respect the fundamentals.

Ya fair enough, if it can be avoided by one person then sure why not, it’s kinda like climate change though, one person isn’t really doing anything when it comes to a global scale, it’s corporations and large industry that are the problems, same with AI anything corporate or government controlled, well once again it doesn’t matter they won’t respect that

I’m a realist me posting this comment and basically anything I do on the internet, I see it as, it’s out there I can’t expect it to stay private, it sucks but that’s how it is

This is an excellent idea, and I’m not sure why people downvoted you. The bot library I used doesn’t support requesting the user profile, but I’m sure it can be fetched directly from the API. I will look into implementing it!

Thanks, it’s a good thing the creator isn’t as uptight as the comments.

What library did you use?

I implemented it. The feature will be available right from the start. The bot will reply this if the user has disabled it:

🔒 The author of this post or comment has the #nobot hashtag in their profile. Out of respect for their privacy settings, I am unable to summarize their posts or comments.

If you create a post on a public facing website, you give up your right prevent the content from being sent to AI.

Even if the tldr bot isn’t sending your content to AI, these AI models are ingesting everything on the internet.

No. Intellectual property. And that’s why licenses exist.

And btw, I publish on my own server, my property, so if I don’t want and state that an AI scans its content, in an automated way, it simply doesn’t have the right.

And I’m sure these AI models will respect your wishes.

deleted by creator

Oo looks pretty good mate, keen to see how it turns out

fyi someone else launched one a day ago; please see my suggestions to them in their ‘how the bot works’ thread in tldrbot@lemmy.world.

tldr: i am really not a fan of automated posting of automated plausibly-not-BS-but-actually-BS, which is what LLMs tend to produce, but I realize some people are. if you really want to try it, please make it very clear to every reader that it is machine-generated before they read the rest of the comment.

Thank you, that’s a reasonable suggestion, I added it to the comment template:

TL;DR: (AI-generated 🤖)