- cross-posted to:

- futurology@futurology.today

A private school in London is opening the UK’s first classroom taught by artificial intelligence instead of human teachers. They say the technology allows for precise, bespoke learning while critics argue AI teaching will lead to a “soulless, bleak future”.

The UK’s first “teacherless” GCSE class, using artificial intelligence instead of human teachers, is about to start lessons.

David Game College, a private school in London, opens its new teacherless course for 20 GCSE students in September.

The students will learn using a mixture of artificial intelligence platforms on their computers and virtual reality headsets.

Are there any measures in place to ensure the AI doesn’t just teach them hallucinated bullshit?

No.

The AI will be called GLaDOS

Are you still there?

“B is for Buy-n-Large, your very best friend.”

Imagine paying to send your child to private school and then they decide to pull this bullshit. Classic profit motivations.

The students will learn using a mixture of artificial intelligence platforms on their computers and virtual reality headsets.

Suspicions immediately confirmed that the principal is a complete fucking dipshit who just wants to chase whatever trends sound futuristic. What an awful person for putting kids through this garbage.

How can we use this AI quantum blockchain to educate kids in a more efficient way?

Marketing play to grab the money off of rich parents. There are still teachers, they are just proxied by “AI”. And there will also still be teachers monitoring. And there will still be teachers for certain topics.

So it’s teacherless, but with plenty of teachers.

This is the self checkout of learning. Requires the same amount of employees with the same skills as before, but wait, now it’s also worse!

This is bad on three levels. Don’t use AI:

- to output info, decisions or advice where nobody will check its output. Will anyone actually check if the AI is accurate at identifying why the kids aren’t learning? (No; it’s a teacherless class.)

- where its outcome might have a strong impact on human lives. Dunno about you guys, but teens’ education looks kind of like a big deal. /s

- where nobody will take responsibility for it. “I did nothing, the AI did it, not my fault”. The school environment is already all about blaming someone else; now it’ll be about blaming something else.

In addition to that, I dug up some info on the school. By comparing this map with this one, it seems to me that the target students of the school are people from one of the poorest areas of London, the Tower Hamlets borough. “Yay”, using poor people as guinea pigs /s

It’s a private school though, so I’d be cautious about assuming they’re poor kids.

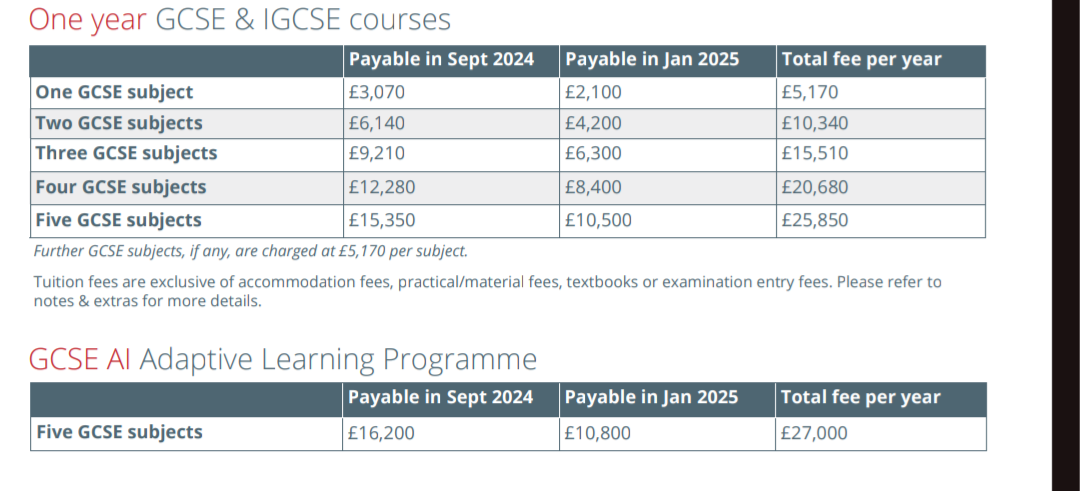

Edit: Yeah, it costs £27000!!!

Fair - my conclusion in this regard was incorrect then.

They’re still using children as guinea pigs though.

poor kids :/

I wonder if they’ll be able to sue for damages in the future? This is clearly a fucking idiotic idea, as anyone with even the most basic understanding of AI would be able to tell you, so there’s no excuse like ‘Oh, who could’ve foreseen that a generation of children raised on completely fake information could be so poorly led’ in 15 years’ time.

There is probably a forced arbitration clause and class action waiver in the TOS…

You think that exists in the UK? I doubt it. You definitely don’t get anything of that sort in the EU. A law is a law.

Lmao does anyone actually think this will have effective educational outcomes??

It potentially could, even better if it’s still supervised by an actual teacher, but each child would have their own AI, so teaching could be personalized. This could mean slow students can still catch up and have a bigger chance of understanding the subjects.

If the AI doesn’t hallucinate incorrect information, I totally agree.

One size fits all classroom learning leaves many students behind, and having a personal AI tutor could really help kids fill in the gaps in their understanding that would otherwise be overlooked.

AI hallucinations are still a very real factor that limits the usefulness of this tech right now though. Imagine coming into class and the tutor you had yesterday is confidently telling you the opposite of what it taught you yesterday.

I’m very pro-AI but this is a terrible idea.

Ignoring the fact that the tech is simply not there for this, how would an AI control the class? They will need a glorified babysitter there at all times, who could simply be teaching instead.

But I think the worst part of this is that certain kids still need individual attention even if they aren’t special needs and there is no way the AI will be able to pick up on that or act on it.

Recipe for disaster. The part about VR headsets is just icing on the cake.

But I think the worst part of this is that certain kids still need individual attention even if they aren’t special needs and there is no way the AI will be able to pick up on that or act on it.

Teachers already miss special needs students all the time. If anything, an AI’s pattern recognition will likely be more able to detect areas a student struggles in, because it can analyze a student’s individual performance in a sandbox. Teachers have dozens of students to keep track of at any given time, and it’s impossible for them to catch everything because we feeble humans have limited mental/emotional bandwidth, unlike our perfect silicon gods.

The truth is that this will actually do a lot of things better than real teachers. It’ll also do a lot of things worse. It’ll be interesting to see how the trade-off plays out and to see which elements of the project are successful enough to incorporate into traditional learning environments.

You make a fair point and a tool made specifically for this would probably be a real boon for teachers, but I doubt they incorporated it into their system.

I’m imagining something slapped together. Basically just an AI voice assistant rewording course material and able to receive voice inputs from students if they have questions. I doubt they even implemented voice recognition to differentiate between students.

Edit: I’m imagining it wrong, every student gets his own AI.

That said, time will tell, and if it shows a bit of promise, it will probably be useful for homework help and whatnot in the near future. It just seems early to be throwing it in a class. At least it isn’t a public school where parents wouldn’t have a choice.

For what it’s worth, most AI tools being used in corporate environments aren’t generative AI like ChatGPT or Stable Diffusion. I very much doubt it will create new material; more likely it will control how the pre-written material is given to the students.

I went to a charter high school as a kid, and all our classes were done on computers. The teacher was in the room if you had questions that the software couldn’t answer, but otherwise everything was completely self-paced. I imagine the AI being used in this school is going to be similar, where all the materials are already vetted, and the algorithm determines how and when a student proceeds through the class. The article refers to the classrooms having “learning coaches”, who seem to serve the same purpose the teachers in my school did, as well.