Over just 14 days our physical disk usage has increased from 52% to 59%. That’s approximately 1.75 GB of disk space being gobbled up for unknown reasons.

At that rate, we’d be out of physical server space in 2-3 months. Of course, one solution would be to double our server disk size, which would double our monthly operating cost.
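The 2-3 month figure can be checked with a simple linear extrapolation. The sketch below assumes growth stays constant at the rate observed over the 14 days, which may not hold; the function names are made up for illustration.

```python
def days_until_full(start_pct: float, end_pct: float, elapsed_days: float) -> float:
    """Days until usage reaches 100%, assuming growth stays linear."""
    rate_per_day = (end_pct - start_pct) / elapsed_days
    if rate_per_day <= 0:
        raise ValueError("usage is not growing")
    return (100.0 - end_pct) / rate_per_day


def implied_disk_size_gb(grown_gb: float, start_pct: float, end_pct: float) -> float:
    """Total disk size implied by a known amount of growth in GB."""
    return grown_gb / ((end_pct - start_pct) / 100.0)


if __name__ == "__main__":
    days = days_until_full(52, 59, 14)
    print(f"days until full: {days:.0f} (~{days / 30:.1f} months)")
    print(f"implied disk size: {implied_disk_size_gb(1.75, 52, 59):.1f} GB")
```

With the numbers from the post (52% to 59% over 14 days), this works out to 82 days, a little under 3 months, and an implied total disk size of about 25 GB.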

The ‘pictrs’ folder named ‘001’ is 132 MB and the one named ‘002’ is 2.2 GB. At first glance this doesn’t look like an image problem.

So, we are stumped and don’t know what to do.

I had this EXACT problem. Truncate your docker logs. I did so when my storage filled up and it freed like 15 GB.

How long had your instance been running for the logs to reach 15 gigs?

About a year.

Thanks!

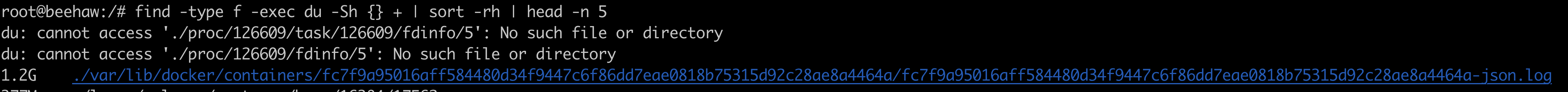

I’ve already done this by running the following command:

truncate -s 0 /var/lib/docker/containers//-json.log

Found the largest file on our server and have no clue what it is and why it is so fucking huge!
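For anyone else hunting for what is eating their disk, here is a minimal sketch of one way to list the largest files under a directory. The function name `largest_files` and the `/var` starting point are assumptions for illustration, not something from the thread; reading some directories may require root.

```python
import heapq
import os


def largest_files(root: str, n: int = 10) -> list[tuple[int, str]]:
    """Return the n largest regular files under root as (size_bytes, path)."""
    sizes = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                if os.path.islink(path):
                    continue  # skip symlinks so files are not counted twice
                sizes.append((os.path.getsize(path), path))
            except OSError:
                continue  # unreadable or vanished file; skip it
    return heapq.nlargest(n, sizes)


if __name__ == "__main__":
    # "/var" is only an assumed starting point; adjust it for your server.
    for size, path in largest_files("/var", n=10):
        print(f"{size / 1024**2:10.1f} MiB  {path}")
```

Sorting by size first makes it easier to spot a single runaway file, such as a log, before digging into individual folders.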

Looks like docker log files. We merged a commit to lemmy-ansible for a fix a while ago: https://github.com/LemmyNet/lemmy-ansible/pull/49

Will this fix be in the next release?

If you’re using lemmy-ansible, follow the instructions there for upgrading.

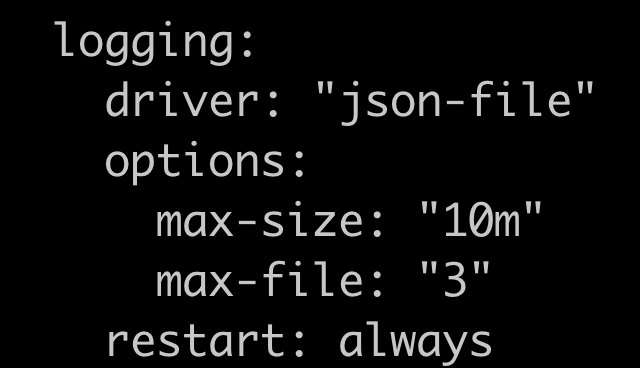

it’s just a change to the docker-compose.yml file. so depending on how your instance is set up (using ansible or docker-compose), you could just make the change yourself.

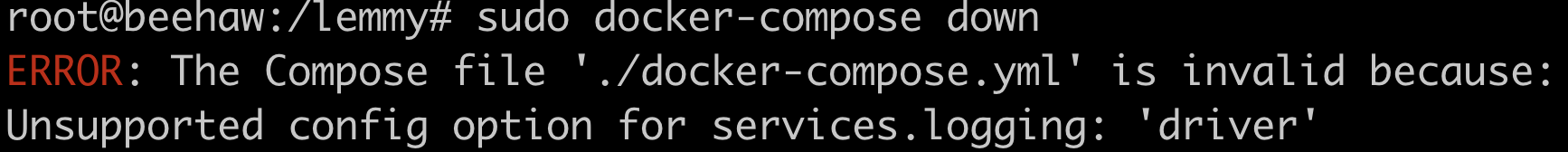

I tried changing the docker-compose.yml file and it didn’t work. It just threw some vague error.

What are those errors specifically, and could you post your docker-compose.yml?

Here’s the part that I’ve added to the docker-compose.yml:

Here’s the error:

from the error message, it looks like you may not have the correct amount of whitespace. here’s a snippet of mine:

    services:
      lemmy:
        image: dessalines/lemmy:0.16.6
        ports:
          - "127.0.0.1:8536:8536"
          - "127.0.0.1:6669:6669"
        restart: always
        environment:
          - RUST_LOG="warn,lemmy_server=info,lemmy_api=info,lemmy_api_common=info,lemmy_api_crud=info,lemmy_apub=info,lemmy_db_schema=info,lemmy_db_views=info,lemmy_db_views_actor=info,lemmy_db_views_moderator=info,lemmy_routes=info,lemmy_utils=info,lemmy_websocket=info"
        volumes:
          - ./lemmy.hjson:/config/config.hjson
        depends_on:
          - postgres
          - pictrs
        logging:
          options:
            max-size: "20m"
            max-file: "5"

i also added the 4 logging lines for each service listed in my docker-compose.yml file. hope this helps!

I have no idea where you are getting that from, but it doesn’t match lemmy-ansible, or the PR I linked.

Also please do not screenshot text, just copy-paste the entire file so I can see what’s wrong with it.

i believe that’s just a regular docker log file. i don’t think by default that docker shrinks their log files, so it’s probably everything since you started your instance.

i’m just guessing though.

I also believe that by default docker does not shrink log files.

In the past I’ve used

--log-opt max-size=10m

or something similar to have docker keep the logs at 10 megs.

Would you mind sharing your code and where, precisely, to place it?

So 7% is 1.75 GB? In total you have only 25 GB? I hope you don’t mind the naive question, but shouldn’t that still be super cheap?

Imagine Lemmy federation was 10x the size it currently is. Would 17.5 GB per 2 weeks be okay? What about 100x? Why is the answer to throw money and space at the problem?

If I look at the list of communities and set it to all, I don’t see anywhere near the amount of content that should generate 1,750 MB in just 14 days.

If this is truly all content, and we’re struggling with this today, what does the fediverse look like in a year? Five years? What if it approaches 1/100th the size of Reddit? When does a significant cost to federate become a downside of joining the fediverse and the default becomes a whitelist instead of a blacklist?

If this is not content, holy shit that’s a lot of text generation. Did you know that 1,750 MB is equivalent to roughly 700,000 pages of text (at about 2.5 KB per page)? Why is there even 1m pages of text being created in such a short timeframe given how small the Lemmy fediverse is?
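As a rough sanity check on the page count, here is the arithmetic as a short sketch. The 2,500 bytes per page figure is an assumption (plain text, no formatting), not a number from the thread, and `estimate_pages` is a made-up helper name.

```python
BYTES_PER_PAGE = 2_500  # assumption: plain text, roughly 2.5 KB per page


def estimate_pages(megabytes: int, bytes_per_page: int = BYTES_PER_PAGE) -> int:
    """Approximate number of plain-text pages that fit in the given megabytes."""
    return megabytes * 1_000_000 // bytes_per_page


if __name__ == "__main__":
    print(f"{estimate_pages(1_750):,} pages")
```

Under that assumption, 1,750 MB comes out to about 700,000 pages, which is still far more text than a small federation would plausibly produce in two weeks.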

ummm

might be the MLs

we talk

a lot

I would be surprised if the full content of all posts by every ML on Lemmy exceeded 1m pages. Text does not take up a lot of space.

tis a joke, sorry

It’s $6 per month for the 25GB and it would increase to $12 per month if we expanded to 50GB. We just don’t understand why our disk usage would increase so dramatically over only 14 days.