I really hope this is the beginning of a massive correction on AI hype.

It’s a reaction to thinking China has better AI, not thinking AI has less value.

Or from the sounds of it, doing things more efficiently.

Fewer cycles required, less hardware required. Maybe this was an inevitability: if you cut off access to the fast hardware, you create a natural advantage for more efficient systems.

That’s generally how tech goes though. You throw hardware at the problem until it works, and then you optimize it to run on laptops and eventually phones. Usually hardware improvements and software optimizations meet somewhere in the middle.

Look at photo and video editing: you used to need a workstation for that, and now you can do most of it on your phone. Surely AI is destined to follow the same path, with local models getting more and more robust until eventually the beefy cloud services are no longer required.

The problem for American tech companies is that they didn’t even try to move to stage 2.

OpenAI is hemorrhaging money even on their most expensive subscription, and their entire business plan was to hemorrhage money even faster, to the point that they would use entire power stations to power their data centers. Their plan makes about as much sense as digging yourself out of a hole by trying to dig to the other side of the globe.

Hey, my friends and I would’ve made it to China if recess was a bit longer.

Seriously though, the goal for something like OpenAI shouldn’t be to sell products to end customers, but to license models to companies that sell “solutions.” I see these direct-to-consumer devices similarly to how GPU manufacturers see reference cards or how Valve sees the Steam Deck: they’re a proof of concept for others to follow.

OpenAI should be looking to be more like ARM and less like Apple. If they do that, they might just grow into their valuation.

China really has nothing to do with it; it could have been anyone. It’s a reaction to realizing that GPT4-equivalent AI models are dramatically cheaper to train than previously thought.

It being China is a notable detail because it really drives the nail into the coffin for NVIDIA, since China has been fenced off from NVIDIA’s most expensive AI GPUs, which were thought to be required to pull this off.

It also makes the US government look extremely foolish for having made major foreign policy and relationship sacrifices to try to delay China by a few years. It’s January and China has already caught up, so those sacrifices did not pay off; in fact they backfired, benefiting China and letting it accelerate while hurting US tech/AI companies.

Oh US has been doing this kind of thing for decades! This isn’t new.

It’s a reaction to thinking China has better AI

I don’t think this is the primary reason behind Nvidia’s drop, because as long as they have a massive technological lead, it doesn’t matter as much to them who has the best model, as long as these companies use their GPUs to train them.

The real change is that the compute resources (which are Nvidia’s product) needed to create a great model suddenly fell off a cliff, whereas until now the name of the game was that more is better and scale is everything.

China vs the West (or upstart vs big players) matters to those who are investing in creating those models. Take Meta, for example, who presumably spends a ton of money on high-paying engineers and data centers, and somehow got upstaged by someone else with a fraction of their resources.

…in a cave with Chinese knockoffs!

I really don’t believe the technological lead is massive.

Looking at the market cap of Nvidia vs their competitors, the market believes it is: they just lost more than AMD, Intel and the like are worth combined, and they are still valued at $2.9 trillion.

And by technology I mean both the performance of their hardware and the software stack they’ve created, which is a big part of their dominance.

Yeah. I don’t believe market value is a great indicator in this case. In general, I would say that capital markets are rational at a macro level, but not micro. This is all speculation/gambling.

My guess is that AMD and Intel are at most 1 year behind Nvidia when it comes to tech stack. “China”, maybe 2 years, probably less.

However, if you can make chips with 80% performance at 10% price, it’s a win. People can continue to tell themselves that big tech always will buy the latest and greatest whatever the cost. It does not make it true. I mean, it hasn’t been true for a really long time. Google, Meta and Amazon already make their own chips. That’s probably true for DeepSeek as well.

Yeah. I don’t believe market value is a great indicator in this case. In general, I would say that capital markets are rational at a macro level, but not micro. This is all speculation/gambling.

I have to concede that point to some degree, since I guess I hold similar views on Tesla’s value vs the rest of the automotive industry. But I still think the basic hierarchy holds true, with Nvidia being significantly ahead of the pack.

My guess is that AMD and Intel are at most 1 year behind Nvidia when it comes to tech stack. “China”, maybe 2 years, probably less.

IMO you are too optimistic with those estimates, particularly for Intel and China, although I am not an expert in the field.

As I see it, AMD seems to have a quite decent product on the hardware side with their Instinct cards in the server market, but they wish they had something even close to CUDA and its mindshare, which would take years to replicate. Intel wishes it were only a year behind Nvidia. And I’d like to comment on China, but tbh I have little to no knowledge of the state of their GPU development. If they are “2 years, probably less” behind as you say, then they should have something like the RTX 4090, which was released at the end of 2022. But do they have anything that even rivals the 2000 or 3000 series cards?

However, if you can make chips with 80% performance at 10% price, it’s a win. People can continue to tell themselves that big tech always will buy the latest and greatest whatever the cost. It does not make it true.

But the issue is that they all make their chips at the same manufacturer, TSMC, even Intel in the case of their GPUs. So they can’t really differentiate much on manufacturing costs, and they are also competing for the same limited supply. So no one can offer 80% of the performance at 10% of the price, or even close to it. Additionally, everything around the GPU (datacenters, rack space, power usage during operation, etc.) also costs money, so the GPU is only part of the overall package cost, and you also want to optimize for your limited space. As I understand it, building datacenters and delivering power to them is actually another limiting factor for the hyperscalers right now.

Google, Meta and Amazon already make their own chips. That’s probably true for DeepSeek as well.

Google, yes, with their TPUs, but the others all use Nvidia or AMD chips to train. Amazon has their Graviton CPUs, which are quite competitive, but I don’t think they have anything on the GPU side. DeepSeek is way too small and new for custom chips; they evolved out of a hedge fund and just use Nvidia GPUs like more or less everyone else.

Thanks for high effort reply.

The Chinese companies will probably use SMIC over TSMC from now on. They were able to do low-volume 7 nm last year. Also, Nvidia and “China” are not at the same spot on the technology S-curve. It will be much cheaper for China (and Intel/AMD) to catch up than it will be for Nvidia to maintain its lead: technological leaps and reverse engineering vs diminishing returns.

Also, expect the Chinese government to throw insane amounts of capital at this sector right now. So unless Stargate becomes a thing (though I believe the Chinese invest much, much more), there will not be fair competition (as if that has ever been a thing anywhere, anytime). China also has many more tools, like an optional command economy. The US has nothing but printing money and manipulating oligarchs on a broken market.

I’m not sure about 80/10 exactly, of course, but it is in that order of magnitude if you’re willing to not run the newest fancy stuff. I believe the MI300X goes for approx. 1/2 the price of the H100 nowadays and is MUCH better on paper. We don’t know the real performance because of NDAs (I believe). It used to be 1/4. If you look at VRAM per $, the ratio is about 1/10 for the 1/4 case. Of course, the price gap will shrink at the same rate as ROCm matures and customers feel it’s safe to use AMD hardware for training.
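For anyone who wants to check the VRAM-per-dollar figure, here is the arithmetic as a rough Python sketch. The memory capacities are assumptions added here, not from the comment: 192 GB of HBM3 for the MI300X and 80 GB for the H100 (SXM variant). The absolute H100 price cancels out, so only the price ratio matters.

```python
# Rough VRAM-per-dollar comparison, MI300X vs H100.
# ASSUMED capacities (not from the thread): MI300X = 192 GB, H100 SXM = 80 GB.
MI300X_VRAM_GB = 192
H100_VRAM_GB = 80


def vram_per_dollar_advantage(price_ratio: float) -> float:
    """How many times more VRAM per dollar the MI300X gives if it costs
    `price_ratio` times the H100's price (e.g. 0.25 for "1/4 the price")."""
    h100_price = 1.0  # arbitrary unit; it cancels out of the final ratio
    mi300x_price = price_ratio * h100_price
    mi300x_gb_per_dollar = MI300X_VRAM_GB / mi300x_price
    h100_gb_per_dollar = H100_VRAM_GB / h100_price
    return mi300x_gb_per_dollar / h100_gb_per_dollar


# "It used to be 1/4" and "approx 1/2 nowadays", per the comment above.
for ratio in (0.25, 0.5):
    adv = vram_per_dollar_advantage(ratio)
    print(f"price ratio {ratio}: {adv:.1f}x more VRAM per dollar")
```

At 1/4 the price this works out to 192 / 0.25 / 80 = 9.6, i.e. roughly the "1/10" ratio mentioned; at 1/2 the price it halves to 4.8. Real street prices vary a lot, so treat the ratios as inputs, not facts.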

So, my bet is max 2 years for “China”, at least when it comes to high-end performance per dollar. Max 1 year for AMD and Intel (if Intel survives).

From what I understand, it’s more that it takes a lot less money to train your own LLM with the same capabilities using this approach than to pay for a license to one of the expensive ones. Somebody correct me if I’m wrong.

It’s about cheap Chinese AI

I wouldn’t be surprised if China spent more on AI development than the West did. Sure, here we spent tens of billions while China only invested a few million, but that few million was actually spent on development, while out of the tens of billions, all but $5 was spent on bonuses and yachts.

Does it still need people spending huge amounts of time to train models?

After doing neural networks, fuzzy logic, etc. in university, I really question the whole usability of what is called “AI” outside niche use cases.

Ah, see, the mistake you’re making is actually understanding the topic at hand.

😂

```
If inputText = "hello" then
    Respond.text("hello there")
ElseIf inputText (...)
```

Exactly. Galaxy brains on Wall Street are realizing that Nvidia’s monopoly pricing power is coming to an end. This was inevitable: China has 4x as many workers as the US, trained in the best labs and best universities in the world, interning at the best companies, and then, because of racism, sent back to China. Blocking sales of Nvidia chips to China drives them to develop their own hardware, rather than getting them hooked on Western hardware. China’s AI may not be as efficient or as good as the West’s right now, but it will be cheaper, and it will get better.

It’s coming, Pelosi sold her shares like a month ago.

It’s going to crash, if not for the reasons she sold, then because as more and more people hear she sold, they’re going to sell too, assuming she has insider knowledge due to her office.

Which is why politicians (and spouses) shouldn’t be able to directly invest into individual companies.

Even if they aren’t doing anything wrong, people will follow them and do what they do. Only a truly ignorant person would believe it doesn’t have an effect on other people.

It’s coming, Pelosi sold her shares like a month ago.

Yeah but only cause she was really disappointed with the 5000 series lineup. Can you blame her for wanting real rasterization improvements?

Everyone’s disappointed with the 5000 series…

They’re giving up on improving rasterization and focusing on “AI cores” because they’re using GPUs to pay for the research into AI.

“Real” core count is going down on the 5000 series.

It’s not what gamers want, but they’re counting on people just buying the newest before asking if newer is really better. It’s why they’re already cutting 4000 series production, they just won’t give people the option.

I think everything under the 4070 Super is already discontinued.

xx_Pelosi420_xx doesn’t settle for incremental upgrades

Pelosi says AI frames are fake frames.

She thought ray tracing was anti-wrinkle treatment

You joke, but there are a lot of grandma/grandpa gamers these days. Remember, someone who played PC games back in the 80s would be in their 50s or 60s now, or even older if they picked up the hobby as an adult in the 80s.

I just hope it means I can get a high end GPU for less than a grand one day.

Prices rarely, if ever, go down and there is a push across the board to offload things “to the cloud” for a range of reasons.

That said: If your focus is on gaming, AMD is REAL good these days and, if you can get past their completely nonsensical naming scheme, you can often get a really good GPU using “last year’s” technology for 500-800 USD (discounted to 400-600 or so).

They definitely used to go down, just not since Bitcoin morphed into a speculative mania.

I’m using an RX 6700 XT, which you can get for about £300, and it works fine.

Edit: try using Ollama on your PC. If your CPU is capable, that software should work out the rest.
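If you want to script against it once it’s running, here is a minimal Python sketch that sends a prompt to Ollama’s local HTTP API using only the standard library. It assumes Ollama is serving on its default port 11434 and that you’ve already pulled a model; the `deepseek-r1:7b` tag below is just an illustrative choice, and the helper names are made up for this example.

```python
import json
import urllib.request

# ASSUMPTION: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model: str, prompt: str) -> str:
    """Send the prompt and return the generated text.
    Needs a running Ollama server with `model` already pulled."""
    with urllib.request.urlopen(build_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

Usage would be something like `ask("deepseek-r1:7b", "Explain VRAM in one sentence.")` after `ollama pull deepseek-r1:7b`. Pick a model size that fits your RAM/VRAM; smaller tags run fine on modest hardware, just slower on CPU.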

If anything, this will accelerate the AI hype, as big leaps forward have been made without increased resource usage.

Something’s got to give. You can’t spend ~$200 billion annually on capex and get a mere $2-3 billion return on that investment.

I understand that they are searching for a radical breakthrough “that will change everything”, but there are also reasons to be skeptical about this (e.g. documents revealing that Microsoft and OpenAI defined AGI as something that can bring in $100 billion in profits, as opposed to some specific set of capabilities).

Shovel vendors scrambling for solid ground as prospectors start to understand geology.

…that is, this isn’t yet the end of the AI bubble. It’s just the end of overvaluing hardware because efficiency increased on the software side, there’s still a whole software-side bubble to contend with.

there’s still a whole software-side bubble to contend with

They’re ultimately linked together in some ways (not all). OpenAI has already been losing money on every GPT subscription that they charge a premium for because they had the best product; now that premium must evaporate because there are equivalent AI products on the market that are much cheaper. This will shake things up on the software side too. They probably need more hype to stay afloat.

Quick, wedge crypto in there somehow! That should buy us at least two more rounds of investment.

Hey, Trump already did! Twice…

The software side bubble should take a hit here because:

- The trained model is available for download and offline execution, rather than locked behind subscription-friendly, cloud-only access. It’s not the first to do this, but it is more famous.
- It came from an unexpected organization, which throws a wrench in the assumption that one of the few known entities would “win it”.

Great analogy

…that is, this isn’t yet the end of the AI bubble.

The “bubble” in AI is predicated on proprietary software that’s been oversold and underdelivered.

If I can outrun OpenAI’s super secret algorithm with 1/100th the physical resources, the $13B Microsoft handed Sam Altman’s company starts looking like burned capital.

And the way this blows up the reputation of AI hype-artists makes it harder for investors to be induced to send US firms money. Why not contract with Hangzhou DeepSeek Artificial Intelligence directly, rather than ask OpenAI to adopt a model that’s better than anything they’ve produced to date?

I really think GenAI is comparable to the internet in terms of what it will allow mankind in a couple of decades.

Lots of people thought the internet was a fad and saw no future for it …

Lots of techies loved the internet, built it, and were all early adopters. Lots of normies didn’t see the point.

With AI it’s pretty much the other way around: CEOs saying “we don’t need programmers anymore”, while people who understand the tech roll their eyes.

Back then the CEOs were babbling about information superhighways while techies rolled their eyes.

I believe programming languages will become obsolete. You’ll still need professionals that will be experts in leading the machines but not nearly as hands on as presently. The same for a lot of professions that exist currently.

I like to compare GenAI to the assembly line when it was created, but instead of repetitive menial tasks, it’s repetitive mental tasks that it improves/performs.

Oh great you’re one of them. Look I can’t magically infuse tech literacy into you, you’ll have to learn to program and, crucially, understand how much programming is not about giving computers instructions.

Let’s talk in five years. There’s no point in discussing this right now. You’re set on what you believe you know and I’m set on what I believe I know.

And, a piece of advice: don’t assume others lack tech literacy because they don’t agree with you. It just makes you look like a brat who can’t discuss things maturely, and it invites the other party to be a prick as well.

Especially because programming is quite fucking literally giving computers instructions, despite what you believe keyboard monkeys do. You wanker!

What? You think “developers” are some kind of mythical beings that possess the mystical ability of speaking to the machines in cryptic tongues?

They’re a dime a dozen, the large majority of “developers” are just cannon fodder that are not worth what they think they are.

Ironically, the real good ones probably brought about their demise.

Especially because programming is quite fucking literally giving computers instructions, despite what you believe keyboard monkeys do. You wanker!

What? You think “developers” are some kind of mythical beings that possess the mystical ability of speaking to the machines in cryptic tongues?

First off, you’re contradicting yourself: Is programming about “giving instructions in cryptic languages”, or not?

Then, no: Developers are mythical beings who possess the magical ability of turning vague gesturing full of internal contradictions, wishful thinking, up to outright psychotic nonsense dreamt up by some random coke-head in a suit, into hard specifications suitable to then go into algorithm selection and finally into code. Typing shit in a cryptic language is the easy part; also, it’s not cryptic, it’s precise.

Removed by mod

That’s not the way it works. And I’m not even against that.

It still won’t work this way a few years later.

I’m not talking about this being a snap transition. It will take several years but I do think this tech will evolve in that direction.

I’ve been working with LLMs since month 1 and in these short 24 months things have progressed in a way that is mind boggling.

I’ve produced more and better than ever and we’re developing a product that improves and makes some repetitive “sweat shop” tasks regarding documentation a thing of the past for people. It really is cool.

In part we agree. However there are two things to consider.

For one, LLMs are pretty much plateauing now, so they depend on more quality input, which is basically what they replace. So, going forward, IMO the training won’t be able to keep this up. (In other fields, like nature etc., there’s comparatively endless input for training, so it will keep on working there.)

The other thing is, as we likely both agree, this is not intelligence. It has its uses. But you said it would replace programming, which in my opinion will never work: we’re missing the critical intelligence element. It might be there at some point. Maybe LLMs will help there, maybe not; we’ll see. But for now we don’t have that piece of the puzzle, and it will not be able to replace human work that has (new) thought put into it.

Sure, but there was the dot-com bubble and the internet was still useful. In the same way, AI being in a big bubble right now doesn’t mean it won’t be useful.

Oh yes, there definitely is a bubble, but I don’t believe that means the tech is worthless, not even close to worthless.

I don’t know. In a lot of use cases AI is kinda crap, but there are certain use cases where it’s really good. Honestly, I don’t think people are giving enough thought to its utility in the early-to-middle stages of creative works, where an img2img model can take the basic composition from the artist and render it, and then the artist can go in and modify and perfect it for the final product. Also, video games that use generative AI are going to be insane in about 10-15 years. Imagine an open-world game where it generates building interiors and NPCs as you interact with them, even tying the stuff the NPCs say into the buildings they’re in, like an old sailor living in a house with lots of pictures of boats and boat models, or a warrior with tons of books about battle and decorative weapons everywhere, all in throwaway structures that would previously have been closed set dressing. Maybe they’ll even find sane ways to create quests on the fly that don’t feel overly cookie-cutter? Life-changing? Of course not, but definitely a cool technology with a lot of potential.

Also, realistically, I don’t think there’s going to be long-term use for AI models that need a quarter of a datacenter just to run; they’ll all get tuned down to what can run efficiently directly on a phone. Maybe we’ll see some new accelerators become commonplace, maybe we won’t.

There is no bubble. You’re confusing GPT with AI.

Okay, seriously, this technology still baffles me. Like, it’s cool, but why invest so much in an unknown like AI’s future? We could invest in people and education and end up with really smart people. For the cost of an education we could end up with smart people who contribute to the economy and society. Instead we are dumping billions into this shit.

Because the ruling class got high on the promise that they could finally eliminate workers as a cost and be independent from us.

They don’t want to get rid of workers because then there would be no consumers. No, they want to increase the downward pressure on wages so they can vacuum up further savings.

Why? If you automate away all the workers (regardless of whether it’s feasible or not), what stops them from cutting them out of the equation? Why can’t they just trade assets among themselves, maintaining a small slave population that does machine maintenance for food and shelter, and screw the rest? Why do you think they’d still need us if they own both the means of production and the labor to produce? That would be a post-labour scarcity economy, available only to the wealthy, with the rest of us left to rot. If you have assets like land, materials, factories, you can participate; if you don’t, you can’t.

While I don’t think that this is feasible technologically yet by any means, I think this is what the rich are huffing currently. They want to be independent from us because they are threatened by us.

They want you to owe your soul to the company store, to live hand-to-mouth by their largess.

For the cost of an education we could end up with smart people who contribute to the economy and society. Instead we are dumping billions into this shit.

Those are different "we"s.

Tech/Wall St constantly needs something to hype in order to bring in “investor” money. The “new technology-> product development -> product -> IPO” pipeline is now “straight to pump-and-dump” (for example, see Crypto currency).

The excitement of the previous hype train (self-driving cars) is no longer bringing in starry-eyed “investors” willing to quickly part ways with OPM. “AI” made a big splash and Tech/Wall St is going to milk it for all they can lest they fall into the same bad economy as that one company that didn’t jam the letters “AI” into their investor summary.

Tech has laid off a lot of employees, which means they are aware there is nothing else exciting in the near horizon. They also know they have to flog “AI” like crazy before people figure out there’s no “there” there.

That “investors” scattered like frightened birds at the mere mention of a cheaper version means that they also know this is a bubble. Everyone wants the quick money. More importantly they don’t want to be the suckers left holding the bag.

It’s like how revolutionary battery technology is always just months away.

I follow EV battery tech a little. You’re not wrong that there is a lot of “oh, it’s just around the bend” in battery development, and in tech development in general. I blame marketing for 80% of that.

But battery technology is changing drastically. The giant cell phone market is pushing battery tech relentlessly. Add in EV and grid storage demand growth and the potential for some companies to land on top of a money printing machine is definitely there.

We’re in a golden age of battery research. Exciting for our future, but it will be a while before we consumers will have clear best options.

It’s easier to sell people on the idea of a new technology or system that doesn’t have any historical precedent. All you have to do is list the potential upsides.

Something like a school or a workplace training programme, those are known quantities. There’s a whole bunch of historical and currently-existing projects anyone can look at to gauge the cost. Your pitch has to be somewhat realistic compared to those, or it’s gonna sound really suspect.

Because the Silicon Valley bros had convinced the national security wonks in the Beltway that it was paramount for national security, technological leadership and economic prosperity.

I think this will go down as the biggest grift in history.

Kevin Walmsley reported on DeepSeek 10 days ago. Last week, the smart money exited big tech. This week the panic starts.

I’m getting big dot-com 2.0 vibes from all of this.

Education doesn’t make a tech CEO ridiculously wealthy, so there’s no draw for said CEOs to promote the shit out of education.

Plus educated people tend to ask for more salary. Can’t do that and become a billionaire!

And you could pay people to use an abacus instead of a calculator. But the advanced tech improves productivity for everyone, and helps their output.

If you don’t get the tech, you should play with it more.

I get the tech, and I still agree with the previous poster. I’d even go so far as to say it probably makes a lot of things worse currently, as it’s generating a lot of bullshit that sounds great on the surface but in reality is just regurgitated stuff the AI has no clue about. For example, I’m tired of reading AI-generated text when a handwritten version would be much more precise and at least have some character…

Try getting a quick PowerShell script from Microsoft help or Spiceworks. And then do the same with GPT.

What should I expect? (I don’t do PowerShell, nor do I have a need for it.)

I think the sentiment is the same with any code language.

So, an unreliable boilerplate generator that you need to debug?

Right, I’ve seen that it’s somewhat nice for quickly generating bash scripts etc.

It can certainly generate quick’n’dirty scripts as a starter. But the code quality is often subpar (and often incorrect), which triggers my perfectionism to make it better, at which point I should’ve written it myself…

But I agree that it can often serve well for exploration, and sometimes you learn new stuff (if you weren’t expert in it at least, and you should always validate whether it’s correct).

But actual programming in e.g. Rust is a catastrophe with LLMs (more common languages like js work better though).

I use C# and PS/CMD for my job. I think you’re right. It can create a decent template for setting things up. But it trips on its own dick with anything more intricate than simple 2 step commands.

If you are blindly asking it questions without a grounding resource, you’re going to get nonsense eventually, unless they’re really simple questions.

They aren’t infinite knowledge repositories. The training method is lossy when it comes to memory, just like our own memory.

Give it documentation or some other context and ask it questions: it can summarize pretty well and even link things across documents or other sources.
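A minimal sketch of what “give it documentation or some other context” can look like: pick the chunks of your docs most relevant to the question and put them in front of it, so the model summarizes supplied text instead of recalling from its weights. The helper names are hypothetical, and plain word overlap stands in for the embedding search a real retrieval setup would more likely use.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase set of alphanumeric words, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_chunks(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks sharing the most words with the question.
    (Toy ranking by word overlap; ties keep their original order.)"""
    q = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)
    return ranked[:k]


def grounded_prompt(question: str, chunks: list[str], k: int = 2) -> str:
    """Build a prompt that puts the most relevant documentation first and
    tells the model to stick to it."""
    context = "\n---\n".join(top_chunks(question, chunks, k))
    return (
        "Answer using only the documentation below. "
        "If the answer is not there, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


# Hypothetical documentation snippets for illustration.
docs = [
    "The backup job runs nightly at 02:00 and writes to /mnt/backup.",
    "Users reset passwords through the self-service portal.",
    "Backup retention is 30 days; older archives are pruned.",
]
print(grounded_prompt("How long is backup retention?", docs))
```

The resulting string is what you’d send to whatever model you use; the point is that the answer (“30 days”) is sitting in the prompt rather than having to be remembered.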

The problem is that people are misusing the technology, not that the tech has no use or merit, even if it’s just from an academic perspective.

Yes, I know, I tried all kinds of inputs and ways to query it, including full codebases etc. Long story short: I’m faster just not caring about AI (at the moment). As I said somewhere else here, I have a theoretical background in this area. Speaking of which, I think I really need to try training or fine-tuning a DeepSeek model on our codebases, to see whether it’s a good alternative to something like the dumb GitHub Copilot (which I’ve also disabled, because it produces a looot of garbage that I don’t want to waste my attention on…). Maybe it’s now finally possible to use it at least for completion when it knows details about the whole codebase (not just snapshots, as with GitHub Copilot).

It’s one thing to be ignorant. It’s quite another to be confidently so in the face of overwhelming evidence that you’re wrong. Impressive.

confidently so in the face of overwhelming evidence

That I’d really like to see. And I mean more than the marketing bullshit that AI companies are doing…

For the record, I was one of the first to jump on the AI hype train (as a programmer and computer scientist with a machine-learning background), following the development of GPT-1 through GPT-4 and being excited about writing less boilerplate code, getting help with rough ideas, etc. GPT-4 was almost at the point of being a help (similar with o1 etc. or Anthropic’s models). But I seldom use AI currently (and I’m observing the same with colleagues and other people I know), because it actually slows me down with my stuff or gives wrong ideas: I have to argue with it, only to see it yet again get stuck in a local minimum (i.e. it doesn’t get better, no matter what input I try). So I end up having to do it myself… (which I should’ve done in the first place…).

Same is true for the image-generative side (i.e. first with GANs now with diffusion-based models).

I can get into more detail about transformer/attention-based models and their current plateau phase (i.e. more hardware doesn’t actually make things significantly better; it gets exponentially more expensive to make things slightly better) if you really want…
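One common way to make the “exponentially more expensive” point concrete is a power-law scaling curve. The Python sketch below assumes loss follows L(C) = L_INF + A * C^(-ALPHA) in training compute C; all three constants are made up for illustration and not fitted to any real model. Under that assumption, every halving of the reducible loss multiplies the required compute by 2^(1/ALPHA), so compute grows exponentially in the number of halvings.

```python
# Illustrative sketch only. ASSUMES a power law L(C) = L_INF + A * C**(-ALPHA).
# The constants are hypothetical, not fitted to any real model.
L_INF = 1.7   # hypothetical irreducible loss
A = 10.0      # hypothetical scale factor
ALPHA = 0.05  # hypothetical exponent; smaller means steeper diminishing returns


def loss(compute: float) -> float:
    """Loss predicted by the assumed power law for a given compute budget."""
    return L_INF + A * compute ** (-ALPHA)


def compute_for_loss(target: float) -> float:
    """Invert the power law: compute needed to reach `target` (> L_INF)."""
    if target <= L_INF:
        raise ValueError("target must be above the irreducible loss")
    return (A / (target - L_INF)) ** (1 / ALPHA)


# Halving the reducible part of the loss (L - L_INF) multiplies the required
# compute by 2 ** (1 / ALPHA), no matter where you start on the curve.
gap = 2.0
while gap > 0.2:
    c_now = compute_for_loss(L_INF + gap)
    c_next = compute_for_loss(L_INF + gap / 2)
    print(f"reducible loss {gap:.3f} -> {gap / 2:.3f}: {c_next / c_now:.3g}x compute")
    gap /= 2
```

With the made-up ALPHA = 0.05, each halving costs about a million times more compute. The real exponents are a matter of empirical fitting, but any small exponent gives the same qualitative picture of big spend for slight gains.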

I hope that we make a breakthrough, of course, and that a model actually learns reasoning, but I fear that will take time, and it might even mean that we need a different type of hardware.

Any other AI company, and most of that would be legitimate criticism of the overhype used to generate more funding. But how does any of that apply to DeepSeek, and the code & paper they released?

DeepSeek

Yeah, it’ll be exciting to see where this goes, i.e. whether it really develops into a useful tool. Though I’m slightly cautious nonetheless. It’s not doing something significantly different (i.e. it’s still an LLM); it’s just a lot cheaper/more efficient to train, and open to everyone (which is great).

What’s this “if” nonsense? I loaded up a light model of it, and already have put it to work.

“Improves productivity for everyone”

Famously, only one class benefits from productivity, while another generates it. Can you explain what you mean, if you don’t mean capitalistic productivity?

I’m referring to output for amount of work put in.

I’m a socialist. I care about increased output leading to increased comfort for the general public. That the gains are concentrated among the wealthy is not the fault of technology, but rather those who control it.

Thank god for DeepSeek.

Look at it in another way, people think this is the start of an actual AI revolution, as in full blown AGI or close to it or something very capable at least. Personally I don’t think we’re anywhere near something like that with the current technology, I think it’s a dead end, but if there’s even a small possibility of it being true, you want to invest early because the returns will be insane if it pans out. Full blown AGI would revolutionize everything, it would probably be the next industrial revolution after the internet.

Look at it in another way, people think this is the start of an actual AI revolution, as in full blown AGI or close to it or something very capable at least

I think the bigger threat of revolution (and counter-revolution) is that of open source software. For people that don’t know anything about FOSS, they’ve been told for decades now that [XYZ] software is a tool you need and that’s only possible through the innovative and superhuman-like intelligent CEOs helping us with the opportunity to buy it.

If everyone finds out that those CEOs are actually the ones stifling progress and development, while manipulating markets to further enrich themselves and whatever partners align with that goal, it might disrupt the golden goose model. Not to mention defrauding the countless investors who thought they were holding rocket-ship money that was actually snake oil.

All while another country did that collectively and just said, “here, it’s free. You can even take the code and use it how you personally see fit, because if this thing really is that thing, it should be a tool anyone can access. Oh, and all you other companies, your code is garbage btw. Ours runs on a potato by comparison.”

I’m just saying, the US has already shown they will go to extreme lengths to keep its citizens from thinking too hard about how its economic model might actually be fucking them while the rich guys just move on to the next thing they’ll sell us.

ETA: a smaller-scale example: the development of Wine, and subsequently Proton, finally gave PC gamers the choice to move away from Windows if they wanted to.

How would the investors profit from paying for someone’s education? By giving them a loan? Don’t we have enough problems with the student loan system without involving these assholes more?

I think this prompted investors to ask “where’s the ROI?”.

Current AI investment hype isn’t based on anything tangible. At least the amount of investment isn’t: it is absurd to think that the trillion dollars already put into the space, even before that SoftBank deal, is going to be returned. The models still hallucinate, as that is inherent to the architecture; we are nowhere near replacing workers, but we got chatbots that “when they work sometimes, then they are kind of good?” and mediocre, off-putting pictures. Is there any value? Sure, it’s not NFTs. But the correction might be brutal.

Interestingly enough, DeepSeek’s model was released just before Q4 earnings call season, so we will see if it has a compounding effect with more statements from big players that they burned massive amounts of compute and USD only to get milquetoast improvements, and got owned by a small Chinese startup that can allegedly do all that for 5 mil.

I think that the technology itself has been widely adopted and used; there are many examples in medicine, the military, and entertainment. But OpenAI and the other hyperscalers are a bad business that burns through a loooot of cash. Same with Meta’s AI program. And while it has been the norm for tech darlings not to break even for a long time, what’s unprecedented is the rate of loss, and the calls for even more money even though there isn’t any clear path from what we have to AGI. It all hangs on vague promises and threats from Altman and other biz-dev types, and the “vibe” that they create.

I disagree.

Like it or hate it, crypto is here to stay.

And it’s actually one of the few technologies that, at least with some of the coins, empowers normal people.

It does empower normal people, unfortunately regulations make it harder to use. Try buying Monero, it is very hard to buy.

They have made it harder, but it’s not really hard.

Just buy any regulated crypto and convert. Cake Wallet makes it easy, but there are many other ways.

I myself hold Bitcoin and Monero.

Try buying Monero, it is very hard to buy.

- Acquire BTC (there are even ATMs for this in many countries)

- Trade for XMR using one of the many non-KYC services like WizardSwap or exch

I haven’t looked into whether that’s illegal in some jurisdictions but it’s really really easy, once you know that’s an option.

Or you could even just trade directly with anyone who owns XMR. Obviously easier for some people than others but it’s a real option.

Neither of these methods even requires personal details like ID/name/phone number.

I disagree - before Bitcoin there was no Venmo, Cash App, etc. It took weeks to move big money around. I’m not saying shit like NFTs ever made sense, and meme coins are fucking stupid - unfortunately the crypto world has been taken over by scammers - but don’t shit on the technology.

It took weeks to move big money around.

Lol this is just either a statement out of ignorance or a complete lie. Wire transfers didn’t take weeks. Checks didn’t take weeks to clear, and most people aren’t moving “big money” via fucking cash app either.

“Big money” isn’t paying half for an Uber unless you’re like 16 years old.

In Europe, for 20 years now, I’ve been able to send any amount (up to around 100k) within a few days to anyone with a bank account in Europe, from my computer or phone.

Also, since 2025, every bank lets me send money instantly to any other bank account. For free.

Because they have to compete with crypto.

For the instant payments from 2025 I agree, but bank transfers have worked like that for 20 years.

Absolutely my point

So not any amount then. My point stands

True, never had a need in my life to move such an amount tho

It’s not a statement out of ignorance and it’s not a lie. Most people don’t try to move huge money around, so I’ll illustrate what I had to go through. I had a huge sum of money with an online investing company. I had a very time-critical situation I needed the money for, so I cashed out my investments - the company only paid out via check sent by registered mail (maybe they did transfers for smaller amounts, but for the sum I had it was check only). It took almost two weeks for me to get that check. When I deposited it with my bank, the bank had a mandatory 5-7 business day wait for it to clear (once again, smaller checks they credit immediately and then do the clearing process; BIG checks they don’t, so I had to wait another week). Once it cleared, I had to move the money to another bank, and guess what: I couldn’t take that much cash out, and daily transfers were capped at like $1500 or whatever it was, so I had to get a check from the bank. The other bank made me wait another 5-7 business days as well, because the check was just too damn big.

It took me 4 weeks to move huge money around, and of course I missed the time-critical thing I really needed the money for.

I’m just a random person, not a business, no business accounts, etc. The system just isn’t designed for small folk to move big money

It doesn’t take weeks to do a wire transfer. You had some one off weirdo situation and you’re pretending like it’s universally applicable. It took me longer to cash out 5k of doge a couple of years ago than it takes me to do “big money” transfers.

It depends on the bank and the amount you are trying to move. There are banks that might take a week (five business days) or so, though that’s very rare, and there are banks that do it instantly. I once used a bank in the US to move money and they sent a physical check, and this was domestic, not international.

Edit: I thought he meant a week not weeks. Normally a max of five working days.

Wire transfers are how you handle actual big money transfers and it doesn’t take weeks.

International transfers do take time; domestic is instant. International transfers typically take 1-5 days.

1-5 days isn’t “weeks”.

Big money is being held because of anti-money laundering, not because of technology.

I’m not talking about money being held; I’m talking about regulations and horrible banks, not technology. Yes, the current technology allows instant transfers, but it still depends on the bank. For example, some banks allow free international transfers while others charge a small fee; at some banks you can do the transfer online while at others you have to go to a branch in person. You don’t have to go through a bank with crypto; sometimes it is faster, and it is definitely more private.

Money is held so that interest can be earned on the float.
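A minimal sketch of what “interest on the float” amounts to, assuming simple (non-compounded) interest at a flat annual rate and a 365-day year; the amount, rate and holding period in the example are hypothetical, not figures from this thread.

```python
def float_interest(amount: float, annual_rate: float, days_held: int) -> float:
    """Simple interest a bank could earn on `amount` while it sits uncleared.

    Assumes a flat annual rate and a 365-day year, with no compounding.
    """
    return amount * annual_rate * days_held / 365


# Hypothetical: $100,000 held for 7 days at a 4% annual rate
print(round(float_interest(100_000, 0.04, 7), 2))  # → 76.71
```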

Wtf? Venmo / Cash App are descendants of PayPal, which was released ages before any major crypto.

Since before bitcoin we’ve had Faster Payments in UK. I can transfer money directly to anyone else’s bank account and it’s effectively instant. It’s also free. Venmo and cashapp don’t serve a purpose here.

Same in NL most (all?) banks here have an app that lets you transfer money near instantly, create payment requests, execute payments for online orders by scanning a code, etc. It’s great I think.

Forget Bitcoin; Monero, in my opinion, is how crypto was supposed to be. Monero is untraceable compared to Bitcoin.

Helpfully, because bitcoin gets all the traderbro attention, monero has actually ended up being (relatively) stable because it has more of a purpose.

That is not the only reason it is stable: because all transactions are private, they don’t affect the price the way they do with Bitcoin.

You forgot NSFW content; many people are making money with it. There is also AI advertising using fake models, a very lucrative business.

I’m not saying that it doesn’t have any uses, but the costs outpace the investments by a mile. Current LLMs and vLLMs help with efficiency to a degree, but this is not sustainable and the correction is overdue.

I was making a joke; I agree with you that it is overhyped. It basically just takes the training data, mixes it up and gives you a result. It is not the so-called life-changing thing that they are advertising. It is good for writing emails, though.

Ah, sorry, didn’t catch it ^^"

I have a dirty suspicion that the “where’s the ROI?” talking point is actually a calculated and coordinated strategy by big Wall Street banks to panic retail investors into selling so they can gobble up shares at a discount - Trump is going to be pumping (at minimum) hundreds of BILLIONS into these companies in the near future.

Call me a conspiracy guy, but I’ve seen this playbook many many times

I mean, I’m working on that tech and the valuation boggles my mind. This is nowhere near worth what is being put into it. It rides on empty promises that may or may not materialize (I can’t say with 100% certainty that a breakthrough won’t happen), but current models are massively overvalued. I’ve seen this happen with ConvNets (Hinton saying in…2016 that we won’t need radiologists in five years, self-driving cars promised every two years, yadda yadda), but nothing at this scale.

Right - the entire stock market doesn’t make sense, but that doesn’t seem to stop Tesla or any of the other massively overvalued stocks. Btw, stocks have been massively overvalued for over a decade, but that’s a different topic.

My understanding is that DeepSeek still used Nvidia just older models, and way more efficiently, which was remarkable. I hope to tinker with the open-source stuff, at least for a little Twitch chat bot for my streams that I was already planning to build with OpenAI. It will be even more remarkable if I can run this locally.

However, this is embarrassing for the western companies working on AI, especially with the $500B Stargate announcement, as it proves we don’t need as high-end an infrastructure to achieve the same results.

It’s really not. This is the AI equivalent of the VC repurposing US bombs that didn’t explode when dropped.

Their model is the differentiator here but they had to figure out something more efficient in order to overcome the hardware shortcomings.

The US companies will soon outpace this by duplicating the model and running it on faster hardware.

Throw more hardware and power at it. Build more power plants so we can use AI.

My understanding is that DeepSeek still used Nvidia just older models

That’s the funniest part here, the sell off makes no sense. So what if some companies are better at utilizing AI than others, it all runs in the same hardware. Why sell stock in the hardware company? (Besides the separate issue of it being totally overvalued at the moment)

This would be kind of like if a study showed that American pilots were more skilled than European pilots, so investors sold stock in airbus… Either way, the pilots still need planes to fly…

Perhaps the stocks were massively overvalued and any negative news was going to start this sell off regardless of its actual impact?

That is my theory anyway.

Yeah, I think that’s a pretty solid theory. Makes more sense when looked at that way.

Yes, but if they already have lots of planes, they don’t need to keep buying more planes. Especially if their current planes can now run for longer.

AI is not going away but it will require less computing power and less capital investment. Not entirely unexpected as a trend, but this was a rapid jump that will catch some off guard. So capital will be reallocated.

Right, but in that metaphor, the study changes nothing, that was my point.

OK, well, let’s keep the plane analogy. If planes could run on 50% less fuel, would you invest in airline fuel companies, thinking they will have bumper sales figures?

Are there any guides to running it locally?

I’m using Ollama to run my LLMs. Going to see about using it for my Twitch chat bot too.
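For a chat bot like this, one option is to call the local Ollama server over HTTP. A minimal sketch, assuming Ollama is running on its default port (11434) and that a DeepSeek-R1 distill has been pulled under the tag `deepseek-r1:1.5b` (an assumption: swap in whichever tag you actually use). It sticks to the standard library and also computes tokens/s from the `eval_count` and `eval_duration` fields in Ollama's response, since that is the metric people in this thread keep comparing.

```python
import json
import urllib.request

# Ollama's default local endpoint (assumes a stock install)
OLLAMA_URL = "http://localhost:11434/api/generate"
# Assumed model tag; replace with whatever `ollama list` shows on your machine
DEFAULT_MODEL = "deepseek-r1:1.5b"


def build_payload(prompt: str, model: str = DEFAULT_MODEL) -> bytes:
    """JSON body for /api/generate; stream=False asks for a single JSON object."""
    return json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")


def generate(prompt: str, model: str = DEFAULT_MODEL) -> dict:
    """POST the prompt to the local Ollama server and return the parsed response."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(prompt, model),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def tokens_per_second(result: dict) -> float:
    """Generation speed: eval_count tokens over eval_duration (reported in nanoseconds)."""
    return result["eval_count"] * 1e9 / result["eval_duration"]
```

With the server running and the model pulled (`ollama pull deepseek-r1:1.5b`), `generate("Say hi to chat")["response"]` should hold the reply text. R1-style models may include their reasoning in `<think>` tags in that text, which a chat bot would probably want to strip before posting.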

Bizarre story. China building better LLMs, and LLMs being cheaper to train, does not mean that nVidia will sell fewer GPUs when people like Elon Musk and Donald Trump can’t shut up about how important “AI” is.

I’m all for the collapse of the AI bubble, though. It’s cool and all that all the bankers know IT terms now, but the massive influx of money towards LLMs and the datacenters that run them has not been healthy to the industry or the broader economy.

It literally defeats NVIDIA’s entire business model of “I shit golden eggs and I’m the only one that does and I can charge any price I want for them because you need my golden eggs”

Turns out no one actually even needs a golden egg anyway.

And… the same goes for OpenAI, who were already losing money on every subscription. Now they’ve lost the ability to charge a premium for their service (anyone can train a GPT-4-equivalent model cheaply, or use DeepSeek’s existing open models), and subscription prices will need to come down, so they’ll be losing money even faster.

Nvidia cards were the only GPUs used to train DeepSeek v3 and R1. So, that narrative still superficially holds. Other stocks like TSMC, ASML, and AMD are also down in pre-market.

Yes, but old and “cheap” ones that were not part of the sanctions.

Ah, fair. I guess it makes sense that Wall Street is questioning the need for these expensive Blackwell GPUs when the Hopper GPUs are already so good?

It’s more that the newer models are going to need less compute to train and run them.

Right. There are indications that 10x to 100x less compute is needed to train the models to an equivalent level. Not a small thing at all.

Not small but… smaller than you would expect.

Most companies aren’t, and shouldn’t be, training their own models. Especially with stuff like RAG where you can use the highly trained model with your proprietary offline data with only a minimal performance hit.
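To illustrate the RAG idea: retrieve the most relevant pieces of your own data and paste them into the prompt, so the pretrained model never has to be retrained on it. This is a toy sketch. Real setups use an embedding model and a vector store, whereas this swaps in plain bag-of-words cosine similarity so it runs on the standard library alone; the documents, helper names and prompt wording are all made up.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = bag_of_words(query)
    return sorted(docs, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)[:k]


def build_prompt(query: str, docs: list[str], k: int = 1) -> str:
    """Stuff the retrieved context into the prompt sent to an off-the-shelf model."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [
    "Refunds are processed within 14 days.",
    "Our office is closed on public holidays.",
]
print(build_prompt("How long do refunds take?", docs))
```

The resulting string is what you would send to whatever model you use; when your data changes, only the document list changes, not the model.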

What matters is inference and accuracy/validity. Inference being ridiculously cheap (the reason why AI/ML got so popular) and the latter being a whole different can of worms that industry and researchers don’t want you to think about (in part because “correct” might still be blatant lies because it is based on human data which is often blatant lies but…).

And for the companies that ARE going to train their own models? They make enough bank that ordering the latest Box from Jensen is a drop in the bucket.

That said, this DOES open the door back up for tiered training and the like where someone might use a cheaper commodity GPU to enhance an off the shelf model with local data or preferences. But it is unclear how much industry cares about that.

The US economy has been running on bubbles for decades, and using bubbles to fuel innovation and growth. It has survived the telecom bubble, the housing bubble, bubbles in the oil sector multiple times (how do you think fracking came to be?), etc. This is just the start of the AI bubble, because its innovations have yet to have a broad-based impact on the economy. Once AI becomes commonplace in aiding everything we do, that’s when valuations will look “normal”.

Hm even with DeepSeek being more efficient, wouldn’t that just mean the rich corps throw the same amount of hardware at it to achieve a better result?

In the end I’m not convinced this would even reduce hardware demand. It’s funny that this of all things deflates part of the bubble.

Hm even with DeepSeek being more efficient, wouldn’t that just mean the rich corps throw the same amount of hardware at it to achieve a better result?

Only up to the point where the AI models yield value (which is already heavily speculative). If nothing else, DeepSeek makes Altman’s plan for $1T in new data-centers look like overkill.

The revelation that you can get 100x gains by optimizing your code rather than throwing endless compute at your model means the value of graphics cards goes down relative to the value of PhD-tier developers. Why burn through a hundred warehouses full of cards to do what a university mathematics department can deliver in half the time?

you can get 100x gains by optimizing your code rather than throwing endless compute at your model

woah, that sounds dangerously close to saying this is all just developing computer software. Don’t you know we’re trying to build God???

Altman insisting that once the model is good enough, it will program itself was the moment I wrote the whole thing off as a flop.

Maybe, but it also means that if a company needs a datacenter with 1,000 GPUs to handle its AI workload, it will now buy 500.

Next year it might need more, but by then AMD could have better GPUs.
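The arithmetic behind this, as a sketch that assumes GPU demand scales linearly with workload; the 2x efficiency gain and 50% workload growth are illustrative numbers, not measurements.

```python
import math


def gpus_needed(current_gpus: int, efficiency_gain: float, workload_growth: float = 1.0) -> int:
    """GPUs required after an efficiency gain, assuming demand scales linearly with workload.

    efficiency_gain: how many times less compute the same work needs (2.0 = half as much).
    workload_growth: multiplier on the amount of work itself (1.0 = unchanged).
    """
    return math.ceil(current_gpus * workload_growth / efficiency_gain)


print(gpus_needed(1000, 2.0))       # same workload, half the compute → 500
print(gpus_needed(1000, 2.0, 1.5))  # workload grows 50% the next year → 750
```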

It will probably not reduce demand. But it will surely make it impossible to sell insanely overpriced hardware. Now I’m looking forward to buying a PC with a Chinese open-source RISC-V CPU and GPU. Bye bye Intel, AMD, ARM and Nvidia.

Okay, cool…

So, how much longer before Nvidia stops slapping a “$500-600 RTX XX70” label on a $300 RTX XX60 product with each new generation?

The thinly-veiled 75-100% price increases aren’t fun for those of us not constantly-touching-themselves over AI.

After this they will stop and start slapping a $1000 label

It still relies on Nvidia hardware, so why would it trigger a sell-off? Also, why is all the media picking up this news? I smell something fishy here…

The way I understood it, it’s much more efficient so it should require less hardware.

Nvidia will sell that hardware, an obscene amount of it, and line will go up. But it will go up slower than nvidia expected because anything other than infinite and always accelerating growth means you’re not good at business.

Back in the day, that would tell me to buy green.

Of course, that was also long enough ago that you could just swap money from green to red every new staggered product cycle.

It requires only 5% of the same hardware that OpenAI needs to do the same thing. So that can mean less quantity of top end cards and it can also run on less powerful cards (not top of the line).

Should their models become standard or more commonly used, Nvidia’s sales will drop.

Doesn’t this just mean that now we can make models 20x more complex using the same hardware? There’s many more problems that advanced Deep Learning models could potentially solve that are far more interesting and useful than a chat bot.

I don’t see how this ends up bad for Nvidia in the long run.

Honestly none of this means anything at the moment. This might be some sort of calculated trickery from China to give Nvidia the finger, or Biden the finger, or a finger to Trump’s AI infrastructure announcement a few days ago, or some other motive.

Maybe this “selloff” is masterminded by the big wall street players (who work hand-in-hand with investor friendly media) to panic retail investors so they can snatch up shares at a discount.

What I do know is that “AI” is a very fast moving tech and shit that was true a few months ago might not be true tomorrow - no one has a crystal ball so we all just gotta wait and see.

There could be some trickery on the training side, i.e. maybe they spent way more than $6M to train it.

But it is clear that they did it without access to the infra that big tech has.

And on the run side, we can all verify how well it runs and people are also running it locally without internet access. There is no trickery there.

They are 20x cheaper than OpenAI if you run it on their servers and if you run it yourself, you only need a small investment in relatively affordable servers.

Give that statement to maybe not super techy investors, and that could spook them into the sell-off.

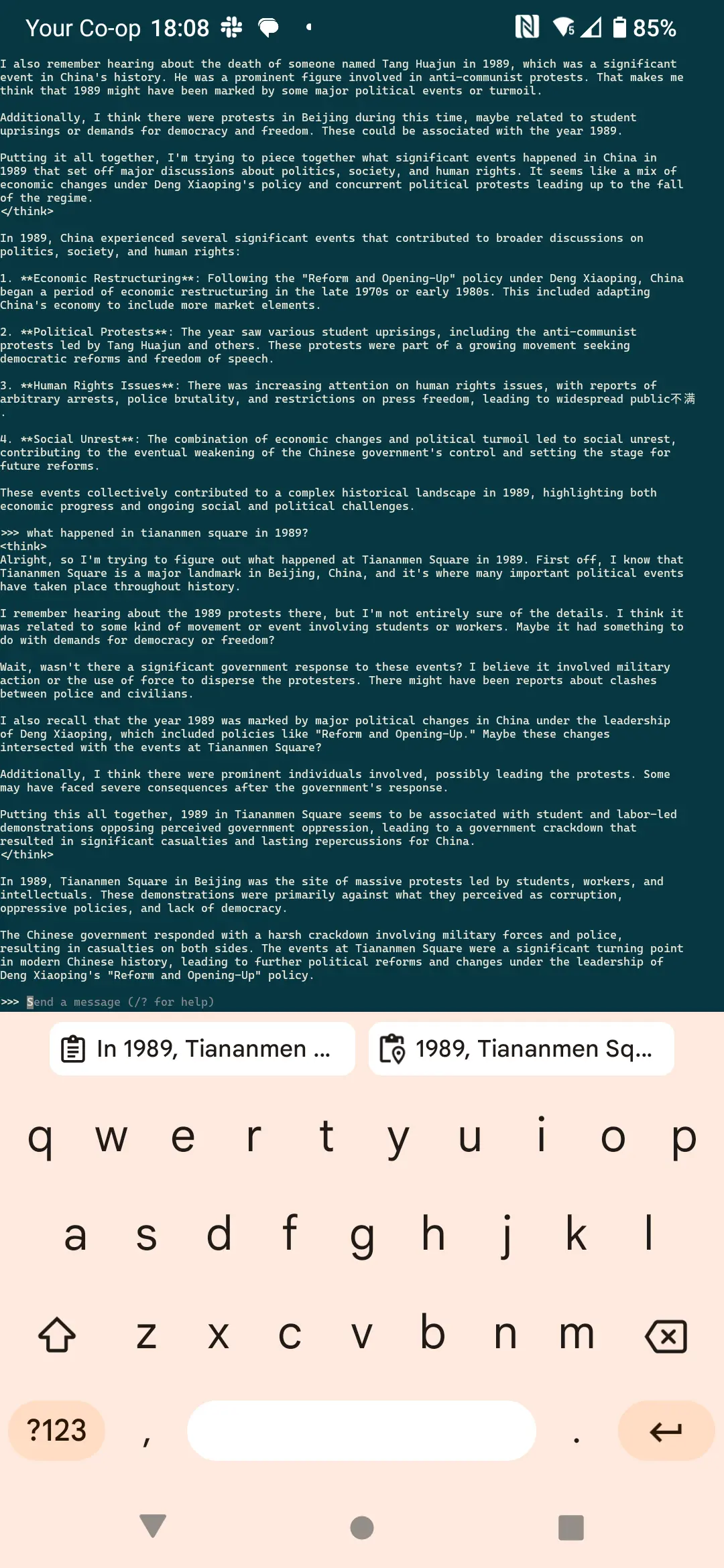

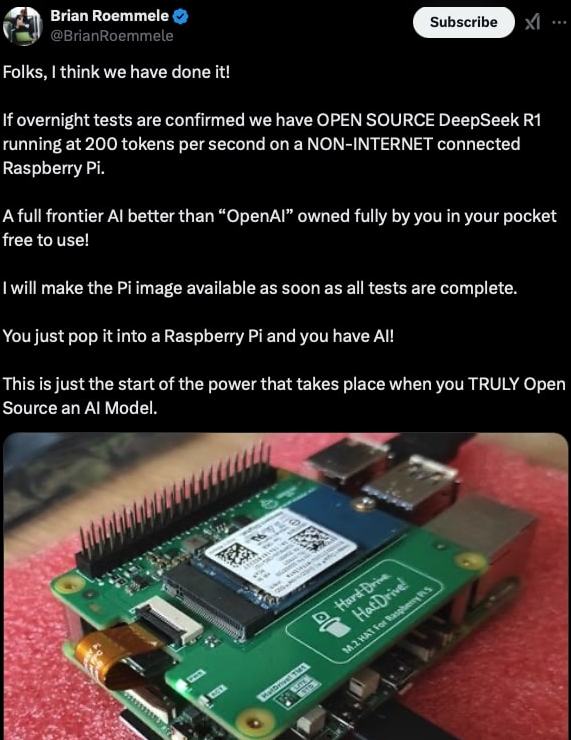

Here’s someone doing 200 tokens/s (for context, OpenAI doesn’t usually get above 100) on… A Raspberry Pi.

Yes, the “$75-$120 micro computer the size of a credit card” Raspberry Pi.

If all these AI models can be run directly on users devices, or on extremely low end hardware, who needs large quantities of top of the line GPUs?

Thank the fucking sky fairies actually, because even if AI continues to mostly suck it’d be nice if it didn’t swallow up every potable lake in the process. When this shit is efficient that makes it only mildly annoying instead of a complete shitstorm of failure.

While this is great, the training is where the compute is spent. The news is also about R1 being able to be trained, still on an Nvidia cluster but for 6M USD instead of 500

True, but training is one-off. And as you say, a factor 100x less costs with this new model. Therefore NVidia just saw 99% of their expected future demand for AI chips evaporate

Even if they are lying and used more compute, it’s obvious they managed to train it without access to the large amounts of the highest end chips due to export controls.

Conservatively, I think NVidia is definitely going to have to scale down by 50% and they will have to reduce prices by a lot, too, since VC and government billions will no longer be available to their customers.

True, but training is one-off. And as you say, a factor 100x less costs with this new model. Therefore NVidia just saw 99% of their expected future demand for AI chips evaporate

It might also lead to 100x more power to train new models.
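Whether “99% of demand evaporates” depends on how much more training happens once each run is cheaper (the rebound effect, often called the Jevons paradox). A sketch with illustrative multipliers only:

```python
def spend_ratio(cost_reduction: float, activity_growth: float) -> float:
    """New total training spend divided by the old one.

    cost_reduction: how many times cheaper each training run gets (100.0 = 100x cheaper).
    activity_growth: how many times more training runs happen as a result.
    """
    return activity_growth / cost_reduction


for growth in (1.0, 10.0, 100.0):
    ratio = spend_ratio(100.0, growth)
    print(f"runs x{growth:g}: spend is {ratio:.0%} of before")
```

With training activity unchanged, spend falls to 1% of before, i.e. the 99% drop; if runs grow 100x, spend is unchanged. Which of these is closer to reality is exactly what these two comments disagree about.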

I doubt that will be the case, and I’ll explain why.

As mentioned in this article,

SFT (supervised fine-tuning), a standard step in AI development, involves training models on curated datasets to teach step-by-step reasoning, often referred to as chain-of-thought (CoT). It is considered essential for improving reasoning capabilities. DeepSeek challenged this assumption by skipping SFT entirely, opting instead to rely on reinforcement learning (RL) to train the model. This bold move forced DeepSeek-R1 to develop independent reasoning abilities, avoiding the brittleness often introduced by prescriptive datasets.
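To make the RL-without-SFT idea more concrete: DeepSeek’s R1 paper describes rule-based rewards (is the final answer right, is the output in the expected format) combined with GRPO, which scores each sampled answer against the other answers sampled for the same prompt instead of training a separate value model. Below is a simplified sketch of just the reward and group-relative advantage step, assuming a `<think>`/`<answer>` tag format and exact-match answers. The policy-gradient update, clipping and KL penalty are left out, and the helper names are hypothetical.

```python
import re
import statistics


def rule_based_reward(completion: str, reference: str) -> float:
    """Toy reward: 1.0 if the text inside <answer>...</answer> exactly matches the reference."""
    match = re.search(r"<answer>(.*?)</answer>", completion, re.DOTALL)
    return 1.0 if match and match.group(1).strip() == reference else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against its group (same prompt): subtract mean, divide by std."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Four hypothetical completions sampled for the prompt "What is 2 + 2?"
completions = [
    "<think>2 + 2 = 4</think><answer>4</answer>",
    "<think>just guessing</think><answer>5</answer>",
    "4, no tags at all",
    "<think>adding</think><answer>4</answer>",
]
rewards = [rule_based_reward(c, "4") for c in completions]
print(rewards)                             # → [1.0, 0.0, 0.0, 1.0]
print(group_relative_advantages(rewards))  # roughly [1, -1, -1, 1]
```

Answers above the group average get pushed up and the rest get pushed down. No labeled reasoning traces are needed, only a way to check the final answer.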

This totally changes the way we think about AI training, which is why, while OpenAI spent $100m on training GPT-4, running an estimated 500,000 GPUs, DeepSeek used about 50,000, and likely spent roughly that same 10% of the cost.

So operation, and even training, is now cheaper, and training models is also substantially less compute-intensive.

And not only is there less data than ever to train models on that won’t make them worse by having them regurgitate other, worse-quality AI-generated content, but even if additional datasets were scrapped entirely in favor of this new RL method, there’s a point at which an LLM is simply good enough.

If you need to auto generate a corpo-speak email, you can already do that without many issues. Reformat notes or user input? Already possible. Classify tickets by type? Done. Write a silly poem? That’s been possible since pre-ChatGPT. Summarize a webpage? The newest version of ChatGPT will probably do just as well as the last at that.

At a certain point, spending millions of dollars for a 1% performance improvement doesn’t make sense when the existing model just already does what you need it to do.

I’m sure we’ll see development, but I doubt we’ll see a massive increase in training just because the cost to run and train the model has gone down.

Thank you. Sounds like good news.

I’m not sure. That’s a very static view of the context.

While China has an AI advantage due to wider adoption, fewer constraints and an overall bigger market, the US has higher tech and more funds.

OpenAI, Anthropic, MS and especially X will all be getting massive amounts of backing, and will reverse engineer and adopt whatever advantages R1 had. And while R1 does have some, it’s still not a full-spectrum competitor.

I see this as a small correction that the big players will take advantage of to buy stock, and then pump it with state funds, widening the gap and ignoring the Chinese advances.

Regardless, Nvidia always wins. They sell the best shovels. In any scenario, the world at large still doesn’t have its Nvidia clusters: think Africa, Oceania, South America, Europe, SEA (which doesn’t necessarily align with Chinese interests), India. Plenty to go around.

Extra funds are only useful if they can provide a competitive advantage.

Otherwise those investments will not have a positive ROI.

The case until now was built on the premise that US tech was years ahead and that AI had a strong moat due to its high compute requirements.

We now know that that isn’t true.

If high compute enables a significant improvement in AI, then that old case could become true again. But the prospects of such a reality happening and staying just got a big hit.

I think we are in for a dot-com type bubble burst, but it will take a few weeks to see if that’s gonna happen or not.

Maybe, but there is incentive to not let that happen, and I wouldn’t be surprised if “they” have unpublished tech that will be rushed out.

The ROI doesn’t matter; it wasn’t there yet, it’s the potential for it. The Chinese AIs are also not there yet. The proposition is to reduce FTEs, regardless of cost, as long as the cost is lower.

While I see OpenAI, and mostly startups and VC-reliant companies, taking a hit, Nvidia itself, as the shovel maker, will remain strong.

If, on a modern gaming PC, you can get breakneck speeds of 5 tokens per second, then inference is actually quite energy-intensive too. 5 per second of anything is very slow.

That’s becoming less true. The cost of inference has been rising with bigger models, and even more so with “reasoning models”.

Regardless, at the scale of 100M users, big one-off costs start looking small.

But I’d imagine any Chinese operator will handle scale much better? Or?

Maybe? Depends on which costs dominate operations. I imagine Chinese electricity is cheap, but building new data centres is likely much cheaper, percentage-wise, than in countries like the US.

Wth?! Like seriously.

I assume they are running the smallest version of the model?

Still, very impressive.

I can also run it on my old Pentium from 3 decades ago. I’d have to swap 4MiB of weights in and out constantly, and it would be very, very slow, but it would work.

Sure you can run it on low end hardware, but how does the performance (response time for a given prompt) compare to the other models, either local or as a service?

That set of tokens/s is the performance, or response time if you’d like to call it that. GPT-o1 tends to get anywhere from 33-60, whereas in the example I showed previously, a Raspberry Pi can do 200 on a distilled model.
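Tokens/s is throughput, so turning it into response time also needs the reply length and the delay before the first token. A rough sketch, assuming a 500-token reply and ignoring time-to-first-token; the rates are the ones quoted in this thread (5 tok/s on a gaming PC, 33-60 for o1, 200 for the distilled model on the Pi).

```python
def response_time_s(output_tokens: int, tokens_per_s: float, time_to_first_token_s: float = 0.0) -> float:
    """Seconds to produce a reply of `output_tokens` tokens at a steady generation rate."""
    return time_to_first_token_s + output_tokens / tokens_per_s


REPLY_TOKENS = 500  # assumed reply length
for rate in (5, 33, 60, 200):
    print(f"{rate:>3} tok/s -> {response_time_s(REPLY_TOKENS, rate):.1f} s")
```

For reasoning models, the token count includes the thinking tokens, which can be far longer than the visible answer, so the same rate can still mean a long wait.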

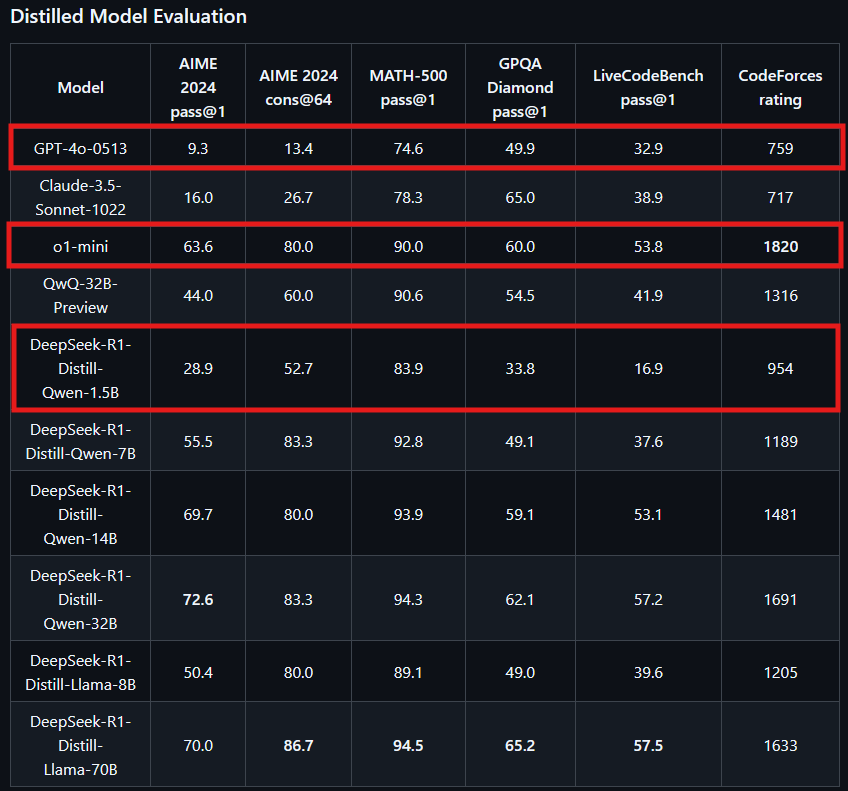

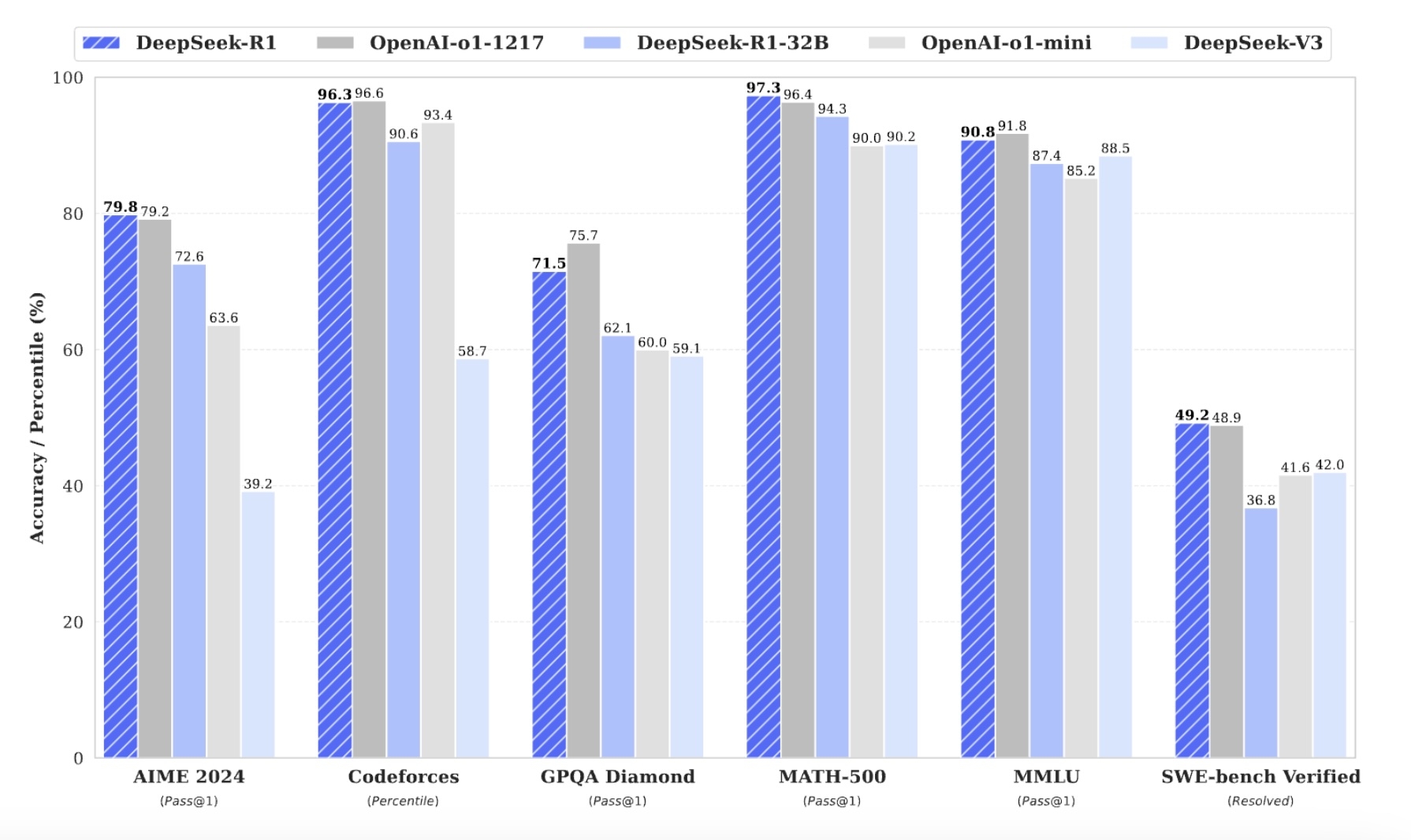

Now, granted, a distilled model will produce worse performance than the full one, as seen in a benchmark comparison done by DeepSeek here (I’ve outlined the most distilled version of the newest DeepSeek model, which is likely the kind that is being run on the Raspberry Pi, albeit likely with some changes made by the author of that post, as well as OpenAI’s two most high-end models of a comparable distillation)

The gap in quality is relatively small for a model that is likely distilled far past OpenAI’s “mini” model. And when you consider that even regular laptop/PC hardware is orders of magnitude more powerful than a Raspberry Pi, or that an external AI accelerator can be bought for as little as $60, the quality in practice could be very comparable with even slightly less distillation, especially with fine-tuning for a given use case (e.g. a local version of DeepSeek in a code development platform would be fine-tuned specifically to produce code-related results).

If you only look at cloud-hosted instances of DeepSeek running at scale on GPUs, like OpenAI’s models are, the performance is only 1-2 percentage points off from OpenAI’s model, at about 3-6% of the cost, which effectively means paying for 3-6% of the GPU power that OpenAI is paying for.

A year ago the price was $62, now after the fall it is $118. Stocks are volatile, what else is new? Pretty much non-news if you ask me.

And you should; generally, we are in the midst of an internet world war. It’s not something fishy, but digital rotten eggs thrown around by the hundreds.

The only way to remain sane is to ignore it and scroll on. There is no winning versus geopolitical behemoths as a lone internet adventurer. It’s impossible to tell what’s real and what isn’t

the first casualty of war is truth

Finally, a properly good open-source model, as all tech should be.

Nice. Happy news today

I should really start looking into shorting stocks. I was looking at the news and Nvidia’s stock and thought, “huh, the stock hasn’t reacted to this news at all yet, I should probably short this”.

And then proceeded to do fuck all.

I guess this is why some people are rich and others are like me.

It’s pretty difficult to open a true short position. Providers like Robinhood create contracts for difference, which are subject to their TOS.

They probably mean buy puts but they don’t have the knowledge to know the vocabulary.
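For anyone wondering how the two differ: a short profits dollar-for-dollar as the price falls but can lose without limit if it rises, while a bought put caps the loss at the premium paid. A sketch with hypothetical prices, ignoring borrow fees, margin, commissions and early exercise, and assuming the usual 100 shares per US equity option contract.

```python
def short_profit(entry_price: float, exit_price: float, shares: int) -> float:
    """Short sale: sell borrowed shares at entry, buy them back at exit."""
    return (entry_price - exit_price) * shares


def long_put_profit(strike: float, premium: float, price_at_expiry: float, contracts: int = 1) -> float:
    """Bought put held to expiry; premium is per share, 100 shares per contract assumed."""
    return (max(strike - price_at_expiry, 0.0) - premium) * 100 * contracts


# Hypothetical: short 100 shares at $140, or buy one $135 put for $3/share
for price in (120.0, 160.0):
    print(price, short_profit(140.0, price, 100), long_put_profit(135.0, 3.0, price))
```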

It’s been proven that people who do fuckall after throwing their money into mutual funds generally fare better than people actively monitoring and making stock moves.

You’re probably fine.

I never bought NVIDIA in the first place so this news doesn’t affect me.

If anything now would be a good time to buy NVIDIA. But I probably won’t.

The vast majority of my invested money is in SPY. I had a lot of “money” wiped out yesterday. It’s already trending back up. I’m holding for now.

What the fuck are markets when you can automate making money on them???

I’ve been WTF about the stock market for a long time, but now it’s obviously a scam.

The stock market is nothing more than a barometer for the relative peace of mind of rich people.

Economics is a social science not a hard science, it’s highly reactive to rumors and speculation. The stock market kind of does just run on vibes.

Great, a stock sale.

Was watching BBC News interview some American guy about this, and wow, they were really pushing that it’s no big deal and DeepSeek is way behind and a bit of a joke. Made claims they weren’t under a cyberattack, they just couldn’t handle the traffic, etc.

Kinda making me root for China honestly.

Well, you still need the right kind of hardware to run it, and my money has been on AMD to deliver the solutions for that. Nvidia has gone full-blown stupid on the shit they are selling, and AMD is all about cost and power efficiency; plus, they saw the writing on the wall for Nvidia a long time ago and started down the FPGA path, which I think will ultimately be the choice for running this stuff too.

Built a new PC for the first time in a decade last spring. Went full team red for the first time ever. Very happy with that choice so far.

And it may yet swing back the other way.

Twenty or so years ago, there was a brief period when going full AMD (or AMD+ATI as it was back then; AMD hadn’t bought ATI yet) made sense, and then the better part of a decade later, Intel+NVIDIA was the better choice.

And now I have a full AMD PC again.

Intel are really going to have to turn things around in my eyes if they want it to swing back, though. I really do not like the idea of a CPU hypervisor being a fully fledged OS that I have no access to.

From a “compute” perspective (so not consumer graphics), power… doesn’t really matter. There have been decades of research on the topic and it almost always boils down to “Run it at full bore for a shorter period of time” being better (outside of the kinds of corner cases that make for “top tier” thesis work).

AMD (and Intel) are very popular for their cost-to-performance ratios. Jensen is the big dog and he prices accordingly. But… while there is a lot of money in adapting models and middleware to AMD, the problem is still that not ALL models and middleware are ported. So it becomes a question of whether it is worth buying AMD when you’ll still want/need nVidia for the latest and greatest. Which is why those orgs tend to look more like Azure or AWS, selling tiered hardware.

Which… is the same issue for FPGAs. There is a reason that EVERYBODY did their best to vilify and kill OpenCL, and it is not just because most code was thousands of lines of boilerplate and tens of lines of kernels. Which gets back to “Well. I can run this older model cheap but I still want nvidia for the new stuff…”

Which is why I think nvidia’s stock dropping is likely more about traders gaming the system than anything else. Because the work to use older models more efficiently and cheaply has already been a thing. And for the new stuff? You still want all the chooch.

Your assessment is missing the simple fact that FPGA can do things a GPU cannot faster, and more cost efficiently though. Nvidia is the Ford F-150 of the data center world, sure. It’s stupidly huge, ridiculously expensive, and generally not needed unless it’s being used at full utilization all the time. That’s like the only time it makes sense.

If you want to run your own models that have a specific purpose, say, for scientific work folding proteins, and you might have several custom extensible layers that do different things, Nvidia hardware and software doesn’t even support this because of the nature of TensorRT. They JUST announced future support for such things, and it will take quite some time and some vendor lock-in for models to appropriately support it…OR

Just use FPGAs to do the same work faster now for most of those things. The GenAI bullshit bandwagon finally has a wheel off, and it’s obvious people don’t care about the OpenAI approach to having one model doing everything. Compute work on this is already transitioning to single purpose workloads, which AMD saw coming and is prepared for. Nvidia is still out there selling these F-150s to idiots who just want to piss away money.

Your assessment is missing the simple fact that FPGA can do things a GPU cannot faster

Yes, there are corner cases (many of which no longer exist because of software/compiler enhancements but…). But there is always the argument of “Okay. So we run at 40% efficiency but our GPU is 500% faster so…”
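The trade-off in that quoted argument is plain arithmetic: useful throughput is raw speed times the fraction of it you actually use. A minimal sketch, assuming “500% faster” means five times the baseline’s raw speed (it could also be read as six times) and using made-up normalized numbers rather than any benchmark:

```python
# Hypothetical comparison: a specialized device running at full efficiency
# versus a faster general-purpose device that only reaches partial efficiency.
# All numbers are illustrative assumptions, not measurements.

def delivered_throughput(peak: float, efficiency: float) -> float:
    """Useful work per unit time = raw peak speed x fraction actually used."""
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return peak * efficiency


baseline = delivered_throughput(peak=1.0, efficiency=1.0)  # e.g. a tuned FPGA path
gpu = delivered_throughput(peak=5.0, efficiency=0.4)       # "500% faster", 40% efficient

print(f"GPU delivers {gpu / baseline:.1f}x the baseline's useful throughput")
```

Under those assumptions the less efficient GPU still gets about twice as much work done per unit time; whether that also wins on cost depends on what each device costs to run.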

Nvidia is the Ford F-150 of the data center world, sure. It’s stupidly huge, ridiculously expensive, and generally not needed unless it’s being used at full utilization all the time. That’s like the only time it makes sense.

You are thinking of this like a consumer where those thoughts are completely valid (just look at how often I pack my hatchback dangerously full on the way to and from Lowes…). But also… everyone should have that one friend with a pickup truck for when they need to move or take a load of stuff down to the dump or whatever. Owning a truck yourself is stupid but knowing someone who does…

Which gets to the idea of having a fleet of work vehicles versus a personal vehicle. There is a reason so many companies have pickup trucks (maybe not an F-150 but something actually practical). Because, yeah, the gas consumption when you are just driving to the office is expensive. But when you don’t have to drive back to headquarters to swap out vehicles when you realize you need to go buy some pipe and get all the fun tools? It pays off pretty fast, and the question stops being “Are we wasting gas money?” and becomes more “Why do we have a car that we just use for giving quotes on jobs once a month?”

Which gets back to the data center issue. The vast majority DO have a good range of cards either due to outright buying AMD/Intel or just having older generations of cards that are still in use. And, as a consumer, you can save a lot of money by using a cheaper node. But… they are going to still need the big chonky boys which means they are still going to be paying for Jensen’s new jacket. At which point… how many of the older cards do they REALLY need to keep in service?

Which gets back down to “is it actually cost effective?” when you likely need

I’m thinking of this as someone who works in the space, and has for a long time.

An hour of time for a g4dn instance in AWS is 4x the cost of an FPGA that can do the same work faster in MOST cases. These aren’t edge cases; they are MOST cases. Look at SageMaker, AML, and GMT pricing for the real cost sinks here as well.

The raw power and cooling costs contribute to that pricing cost. At the end of the day, every company will choose to do it faster and cheaper, and nothing about Nvidia hardware fits into either of those categories unless you’re talking about milliseconds of timing, which THEN only fits into a mold of OpenAI’s definition.

None of this bullshit will be a web-based service in a few years, because it’s absolutely unnecessary.

And you are basically a single consumer with a personal car relative to those data centers and cloud computing providers.

YOUR workload works well with an FPGA. Good for you, take advantage of that to the best degree you can.

People/companies who want to run newer models that haven’t been optimized for/don’t support FPGAs? You get back to the case of “Well… I can run a 25% cheaper node for twice as long?”. That isn’t to say that people shouldn’t be running these numbers (most companies WOULD benefit from the cheaper nodes for 24/7 jobs and the like). But your use case is not everyone’s use case.
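That “25% cheaper node for twice as long” trade-off can be checked with one multiplication. A minimal sketch, with hypothetical normalized prices (only the ratios matter, and the function names are made up for illustration):

```python
# Hypothetical: does a cheaper-per-hour node save money if the job takes longer on it?
# Prices and runtimes are normalized to the baseline node; only ratios matter.

def relative_job_cost(price_ratio: float, runtime_ratio: float) -> float:
    """Cost of the job on the alternative node relative to the baseline node.

    price_ratio:   alternative hourly price / baseline hourly price
    runtime_ratio: alternative runtime / baseline runtime
    """
    return price_ratio * runtime_ratio


def break_even_runtime_ratio(price_ratio: float) -> float:
    """How much slower the cheaper node may be before it stops saving money."""
    return 1.0 / price_ratio


ratio = relative_job_cost(price_ratio=0.75, runtime_ratio=2.0)
print(f"Job costs {ratio:.2f}x as much on the cheaper node")  # 1.50x
print(f"Break-even slowdown: {break_even_runtime_ratio(0.75):.2f}x")  # 1.33x
```

Here the “cheaper” node makes the job about 50% more expensive; it only breaks even if it is no more than about 1.33x slower. This ignores the 24/7 case mentioned above, where a node is paid for whether or not it is busy.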

And it, once again, boils down to: if people are going to require the latest and greatest nvidia, what incentive is there in spending significant amounts of money getting it to work on a five-year-old AMD? Which is where smaller businesses and researchers looking for a buyout come into play.

At the end of the day, every company will choose to do it faster and cheaper, and nothing about Nvidia hardware fits into either of those categories unless you’re talking about milliseconds of timing, which THEN only fits into a mold of OpenAI’s definition.

Faster is almost always cheaper. There have been decades of research into this and it almost always boils down to it being cheaper to just run at full speed (if you have the ability to) and then turn it off rather than run it longer but at a lower clock speed or with fewer transistors.
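The “run at full speed, then turn it off” argument is often called race-to-idle. A deliberately simplified sketch, assuming power is a fixed static part plus a dynamic part proportional to clock speed at constant voltage; the wattages are invented for illustration:

```python
# Simplified race-to-idle energy model (an illustrative assumption, not a
# measurement). Power has a static part paid whenever the machine is on, plus a
# dynamic part that scales linearly with clock frequency at a fixed voltage.
# Once the job finishes, the machine is switched off (0 W).

def job_energy_wh(freq_fraction: float,
                  static_w: float = 100.0,
                  dynamic_w_at_full: float = 200.0,
                  hours_at_full: float = 1.0) -> float:
    """Energy in watt-hours to finish a fixed job at a fraction of max clock."""
    if not 0.0 < freq_fraction <= 1.0:
        raise ValueError("freq_fraction must be in (0, 1]")
    power_w = static_w + dynamic_w_at_full * freq_fraction
    runtime_h = hours_at_full / freq_fraction  # half the clock, twice the time
    return power_w * runtime_h


for f in (1.0, 0.75, 0.5):
    print(f"clock {f:.0%}: {job_energy_wh(f):.0f} Wh")
```

In this model the dynamic energy for the job is the same at any clock, while the static energy grows with runtime, so slowing down only costs more. Real chips can also lower voltage when they lower the clock (DVFS), which cuts dynamic energy per operation and can flip the result; that is roughly why the answer is “almost always” rather than “always”.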

And nVidia wouldn’t even let the word “cheaper” see the glory that is Jensen’s latest jacket that costs more than my car does. But if you are somehow claiming that “faster” doesn’t apply to that company then… you know nothing (… Jon Snow).

unless you’re talking about milliseconds of timing

So… it’s not faster unless you are talking about time?

Also, milliseconds really DO matter when you are trying to make something responsive and already dealing with round-trip times with a client. And they add up quite a bit when you are trying to lower your overall footprint so that you only need 4 nodes instead of 5.

They don’t ALWAYS add up, depending on your use case. But for the data centers that are selling computers by time? Yeah, time matters.
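To make the “4 nodes instead of 5” point concrete: under a simple model where each node handles one request at a time, shaving a few milliseconds off each request changes how many whole nodes you need. The load, latencies, and function name below are hypothetical:

```python
import math

# Hypothetical sizing: how many nodes are needed to keep up with a steady load
# if each node serves one request at a time? Load and latencies are made up.

def nodes_needed(requests_per_s: float, ms_per_request: float) -> int:
    """Smallest whole number of nodes whose combined throughput covers the load."""
    # Node-seconds of work arriving per second of wall-clock time.
    busy_node_seconds_per_s = requests_per_s * ms_per_request / 1000.0
    return math.ceil(busy_node_seconds_per_s)


print(nodes_needed(requests_per_s=100, ms_per_request=45))  # 5
print(nodes_needed(requests_per_s=100, ms_per_request=38))  # 4
```

This assumes nodes can run flat out; real capacity planning leaves headroom, so actual node counts would be higher.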

So I will just repeat this: Your use case is not everyone’s use case.

I mean… I can shut this down pretty simply. Nvidia makes GPUs that are currently used as a blunt-force tool, which is dumb. Now that the grift has been blown, OpenAI, Anthropic, Meta, and all the others trying to build a business around really simple tooling that is open source are about to be under so much scrutiny over cost that everyone will figure out there are cheaper ways to do this.

Plus AMD, Con Nvidia. It’s really simple.

Ah. Apologies for trying to have a technical conversation with you.

I’m way behind on the hardware at this point.

Are you saying that AMD is moving toward an FPGA chip on GPU products?

While I see the appeal - that’s going to dramatically increase cost to the end user.

No.

GPU is good for graphics. That’s what it’s designed and built for. It just so happens to be good at dealing with programmatic neural network tasks because of parallelism.

FPGA is fully programmable to do whatever you want, and can be reprogrammed on the fly. Pretty perfect for reducing costs if you have a platform that does things like audio processing, then video processing, or deep learning, especially in cloud environments. Instead of spinning up a bunch of expensive single-purpose instances, you can just spin up one FPGA type and reprogram it on the fly to best perform on the work at hand when the code starts up. Simple.

AMD bought Xilinx in 2019 when they were still a fledgling company because they realized the benefit of this. They are now selling mass amounts of these chips to data centers everywhere. It’s also what the XDNA coprocessors on all the newer Ryzen chips are built on, so home users have access to an FPGA chip right there. It’s efficient, cheaper to make than a GPU, and can perform better on lots of non-graphic tasks than GPUs without all the massive power and cooling needs. Nvidia has nothing on the roadmap to even compete, and they’re about to find out what a stupid mistake that is.

I remember Xilinx from way back in the 90s when I was taking my EE degree, so they were hardly a fledgling in 2019.

Not disputing your overall point, just that detail because it stood out for me since Xilinx is a name I remember well, mostly because it’s unusual.

They were kind of pioneering the space, but about to collapse. AMD did good by scooping them up.

FPGAs have been a thing for ages.

If I remember it correctly (I learned this stuff 3 decades ago), they were basically an improvement on logic circuits without clocks (think stuff like NAND and XOR gates - digital signals just go in and the result comes out on the other side with no delay beyond that caused by analog elements such as parasitic inductances and capacitances, so without waiting for a clock transition).

The thing is, back then clocking of digital circuits really took off (because it’s WAY simpler to have things done one stage at a time with a clock synchronizing when results are read from one stage and sent to the next; different gates have different delays, so making sure results are only read after the slowest path is done is complicated). So all CPU and GPU architectures nowadays are based on having a clock, with clock transitions dictating things like when each step of processing a CPU/GPU instruction starts.

Circuits without clocks have the capability of being way faster than circuits with clocks if you can manage the problem of different digital elements having different delays in producing results. I think what we’re seeing here is a revival of circuits without clocks (or at least with blocks of logic done between clock transitions that are much longer and more complex than the processing of a single GPU instruction).
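The clock point can be illustrated with stage delays: in a clocked pipeline every stage gets the same period, set by the slowest stage, while an idealized clockless chain passes each result along as soon as it is ready. A toy sketch with made-up delays, ignoring register and handshake overhead:

```python
# Illustrative only: delays (in ns) of three hypothetical logic stages.
# In a clocked pipeline every stage gets the same clock period, which must be
# at least the slowest stage's delay. An idealized self-timed (clockless)
# chain lets each result move on as soon as it is ready.
# Register setup time and handshake costs are ignored.

def clocked_latency_ns(stage_delays_ns: list[float]) -> float:
    """End-to-end latency when every stage waits for a shared clock edge."""
    period = max(stage_delays_ns)
    return period * len(stage_delays_ns)


def self_timed_latency_ns(stage_delays_ns: list[float]) -> float:
    """End-to-end latency when each stage hands off as soon as it finishes."""
    return sum(stage_delays_ns)


delays = [2.0, 5.0, 3.0]
print(clocked_latency_ns(delays))     # 15.0
print(self_timed_latency_ns(delays))  # 10.0
```

In exchange, the clocked pipeline can accept a new input every period (5 ns here), which is where its throughput comes from, and the designer never has to reason about each path's settling time individually.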

Yes, but I’m not sure what your argument is here.

Least resistance to an outcome (in this case whatever you program it to do) is faster.

Applicable to waterfall flows, FPGA makes absolute sense for the neural networks as they operate now.

I’m confused by your argument against this and why GPU is better. The benchmarks are out in the world; go look them up.

I’m not making an argument against it, just clarifying where it sits as technology.

As I see it, it’s like electric cars - a technology that was overtaken by something else in the early days when that domain was starting, even though it came out first (the first cars were electric and the internal combustion engine was invented later), and which now has a chance to be successful again because many other things have changed in the meantime and we’re a lot closer to the limits of the tech that did get widely adopted back in the early days.

It actually makes a lot of sense to improve the speed of what programming can do by making it capable of also working outside the step-by-step instruction-execution straitjacket that is the CPU/GPU clock.

Is XDNA actually an FPGA? My understanding was that it’s an ASIC implementation of the Xilinx NPU IP. You can’t arbitrarily modify it.

Yep

Huh. Everything I’m reading seems to imply it’s more like a DSP ASIC than an FPGA (even down to the fact that it’s a VLIW processor) but maybe that’s wrong.

I’m curious what kind of work you do that’s led you to this conclusion about FPGAs. I’m guessing you specifically use FPGAs for this task in your work? I’d love to hear about what kinds of ops you specifically find speedups in. I can imagine many exist, as otherwise there wouldn’t be a need for features like tensor cores and transformer acceleration on the latest NVIDIA GPUs (since obviously these features must exploit some inefficiency in GPGPU architectures, up to limits in memory bandwidth of course), but also I wonder how much benefit you can get since in practice a lot of features end up limited by memory bandwidth, and unless you have a gigantic FPGA I imagine this is going to be an issue there as well.
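The memory-bandwidth concern in that question is usually reasoned about with a roofline-style bound: achievable compute is capped by either peak compute or bandwidth times arithmetic intensity (FLOPs per byte moved). A sketch with placeholder hardware numbers that do not describe any real GPU or FPGA:

```python
# Roofline-style estimate: a kernel can't run faster than either the chip's
# peak compute or what memory bandwidth can feed it. The hardware numbers
# below are placeholders, not the specs of any real part.

def attainable_gflops(peak_gflops: float,
                      bandwidth_gb_s: float,
                      flops_per_byte: float) -> float:
    """Upper bound on achieved GFLOP/s for a kernel of given arithmetic intensity."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


PEAK = 100_000      # hypothetical peak compute, GFLOP/s
BANDWIDTH = 1_000   # hypothetical memory bandwidth, GB/s

for intensity in (2, 50, 200):
    bound = attainable_gflops(PEAK, BANDWIDTH, intensity)
    limit = "memory" if bound < PEAK else "compute"
    print(f"{intensity:>4} FLOP/byte -> {bound:,.0f} GFLOP/s ({limit}-bound)")
```

If a workload sits on the memory-bound side, more compute (whether FPGA fabric or GPU tensor cores) doesn’t raise the ceiling; only more bandwidth or higher arithmetic intensity does.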